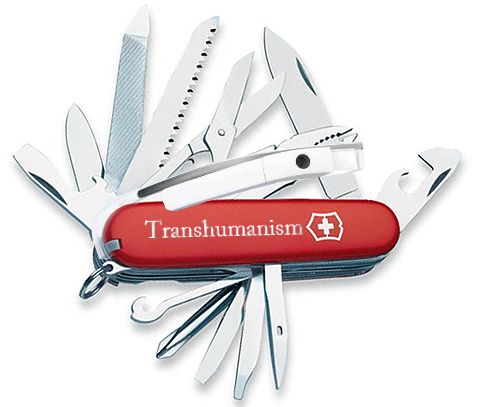

A widely accepted definition of Transhumanism is: The ethical use of all kinds of technology for the betterment of the human condition.

This all-encompassing summation is a good start as an elevator pitch to laypersons, were they to ask for an explanation. Practitioners and contributors to the movement, of course, know how to branch this out into specific streams: science, philosophy, politics and more.

- This article was originally published on ImmortalLife.info

We are in the midst of a technological revolution, and it is cool to proclaim that one is a Transhumanist. Yet many intelligent and focused Transhumanists are asking some all-important questions: What road-map have we drawn out, and what concrete steps are we taking to bring the goals of Transhumanism to fruition?

Transhumanism could be looked at as culminating in Technological Singularity. People comprehend the meaning of Singularity differently. One such definition: Singularity marks a moment when technology trumps the human brain, and the limitations of the mind are surpassed by artificial intelligence. As I am an author and not a scientist, my definition of the Singularity is colored by creative vision. I call it Dirrogate Singularity.

I see us humans successfully and practically harnessing the strides we’ve made in semiconductor tech, neural networks, artificial intelligence, and digital progress in general over the past century to create Digital Surrogates of ourselves: our Dirrogates. In doing so, humans will reach pseudo-God status and will be free to merge with these creatures they have made in their own likeness, attaining Dirrogate Singularity.

So, how far into the future will this happen? Not very far. In fact, it can commence as soon as today, or as far off as a couple of years from now. The conditions and timing are right for us to “trans-form” into Digital Beings: Dirrogates.

I’ll use excerpts from the story ‘Memories with Maya’ to seed ideas for a possible road-map to Dirrogate Singularity, while keeping the tenets of Transhumanism in focus on the dashboard as we steer ahead. As this text will deconstruct many parts of the novel, major spoilers are unavoidable.

Dirrogate Singularity vs. The Singularity:

The main distinction in definition I make is this: I don’t believe Singularity is the moment when technology trumps the human brain. I believe Singularity is when the human mind accepts and does not discriminate between an advanced “Transhuman” (effectively, a mind upload living in a bio-mechanical body) and a “Natural” (an un-amped Homo sapiens).

This could be seen as a different interpretation of the commonly accepted concept of The Singularity. As one of the aims of this essay is to create a possible road-map to seed ideas for the Transhumanism movement, I choose to look at a wholly digital path to Transhumanism, bypassing human augmentation via nanotechnology, prosthetics or cyborg-ism. As we will see further down, Dirrogate Singularity could slowly evolve into the commonly accepted definitions of Technological Singularity.

What is a Dirrogate:

A portmanteau of Digital + Surrogate. An excerpt from the novel explains in more detail:

“Let’s run the beta of our social interaction module outside.”

Krish asked the prof to follow him to the campus ground in front of the food court. They walked out of the building and approached a shaded area with four benches. As they were about to sit, my voice came through the phone’s speaker. “I’m on your far right.”

Krish and the prof turned, scanning through the live camera view of the phone until they saw me waving. The phone’s compass updated me on their orientation. I asked them to come closer.

“You have my full attention,” the prof said. “Explain…”

“So,” Krish said, in true geek style… “Dan knows where we are, because my phone is logged in and registered into the virtual world we have created. We use a digital globe to fly to any location. We do that by using exact latitude and longitude coordinates.” Krish looked at the prof, who nodded. “So this way we can pick any location on Earth to meet at, provided of course, I’m physically present there.”

“I understand,” said the prof. “Otherwise, it would be just a regular online multi-player game world.”

“Precisely,” Krish said. “What’s unique here is a virtual person interacting with a real human in the real world. We’re now on the campus Wifi.” He circled his hand in front of his face as though pointing out to the invisible radio waves. “But it can also use a high-speed cell data network. The phone’s GPS, gyro, and accelerometer updates as we move.”

Krish explained the different sensor data to Professor Kumar. “We can use the phone as a sophisticated joystick to move our avatar in the virtual world that, for this demo, is a complete and accurate scale model of the real campus.”

The prof was paying rapt attention to everything Krish had to say. “I laser scanned the playground and the food-court. The entire campus is a low rez 3D model,” he said. “Dan can see us move around in the virtual world because my position updates. The front camera’s video stream is also mapped to my avatar’s face, so he can see my expressions.”

“Now all we do is not render the virtual buildings, but instead, keep Daniel’s avatar and replace it with the real-world view coming in through the phone’s camera,” explained Krish.

“Hmm… so you also do away with render overhead and possibly conserve battery life?” the prof asked.

“Correct. Using GPS, camera and marker-less tracking algorithms, we can update our position in the virtual world and sync Dan’s avatar with our world.”

“And we haven’t even talked about how AI can enhance this,” I said.

I walked a few steps away from them, counting as I went.

“We can either follow Dan or a few steps more and contact will be broken. This way in a social scenario, virtual people can interact with humans in the real world,” Krish said. I was nearing the personal space out of range warning.

“Wait up, Dan,” Krish called.

I stopped. He and the prof caught up.

“Here’s how we establish contact,” Krish said. He touched my avatar on the screen. I raised my hand in a high-five gesture.

“So only humans can initiate contact with these virtual people?” asked the prof.

“Humans are always in control,” I said. They laughed.

“Aap Kaise ho?” Krish said.

“Main theek hoo,” I answered a couple of seconds later, much to the surprise of the prof.

“The AI module can analyze voice and cross-reference it with a bank of ten languages.” he said. “Translation is done the moment it detects a pause in a sentence. This way multicultural communication is possible. I’m working on some features for the AI module. It will be based on computer vision libraries to study and recognize eyebrows and facial expressions. This data stream will then be accessible to the avatar’s operator to carry out advanced interaction with people in the real world–”

“So people can have digital versions of themselves and do tasks in locations where they cannot be physically present,” the prof completed Krish’s sentence.

“Cannot or choose not to be present and in several locations if needed,” I said. “There is no reason we can’t own several digital versions of ourselves doing tasks simultaneously.”

“Each one licensed with a unique digital fingerprint registered with the government or institutions offering digital surrogate facilities.” Krish said.

“We call them di-rro-gates.” I said.
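The excerpt describes two mechanics that are easy to picture in code: positions given as latitude and longitude, and contact that breaks once the avatar walks out of range. Below is a minimal sketch of that idea, not the system from the novel. The haversine distance formula is standard; the names `distance_m` and `contact_status`, the 10-metre limit and the 80% warning threshold are all assumptions made up for illustration.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres

def distance_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two lat/long points (haversine)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

# Hypothetical range limit; the story never states an actual figure.
PERSONAL_SPACE_M = 10.0

def contact_status(phone, avatar, limit_m=PERSONAL_SPACE_M, warn_ratio=0.8):
    """Classify the link between a phone and an avatar, each a (lat, lon) tuple."""
    d = distance_m(*phone, *avatar)
    if d > limit_m:
        return "broken"      # avatar has walked out of range
    if d > warn_ratio * limit_m:
        return "warning"     # nearing the out-of-range limit
    return "in_range"

krish = (12.0, 77.0)       # made-up coordinates for the phone
dan = (12.00008, 77.0)     # avatar roughly nine metres to the north
print(contact_status(krish, dan))
```

A real implementation would also have to cope with GPS error, which at this scale can easily exceed the range limit itself; the excerpt’s mention of camera and marker-less tracking hints at why position alone would not be enough.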

One of the characters in the story also says: “Humans are creatures of habit.” and, “We live our lives following the same routine day after day. We do the things we do with one primary motivation–comfort.”

Whether this is entirely true or not, there is something to think about here… What does ‘improving the human condition’ imply? To me, comfort is high on the list and a major motivation. If people can spawn multiple Dirrogates of themselves that can interact with real people wearing future iterations of Google Glass (for lack of a more popular word for Augmented Reality visors)… then the next step on the road-map to Dirrogate Singularity is to look at a few case examples of Dirrogate interaction.

Evangelizing Transhumanism:

In writing the novel, I took several risks, story length being one. I’ve attempted to keep the philosophy subtle, almost hidden in the story. Judging by reviews on sites such as GoodReads.com, it is plain to see that many of today’s science fiction readers are after cliffhanger-style science fiction and gravitate toward, or possibly expect, a Dystopian future. This root craving must be addressed in laypeople if we are to make Transhumanism succeed as a movement.

I’ve noticed comments that the sex did not add much to the story. No one (yet) has delved deeper to see if there was a reason for the sex scenes and if there was an underlying message. The success of Transhumanism is going to lie in large-scale understanding and mass adoption of the values of the movement by laypeople. Google Glass will make a good case study in this regard. If they get it wrong, Glass will quickly share the same fate and ridicule as Bluetooth headsets.

One of the first steps, in my view, toward improving the human condition is experiencing pleasure… of every kind, especially carnal.

In that sense, we already are Digital Transhumans. Long distance video calls, teledildonics and recent mainstream offerings such as Durex’s “Fundawear” can bring physical, emotional and psychological comfort to humans, without the traditional need for physical proximity or human touch.

(Durex’s Fundawear – Image Courtesy Snapo.com)

These physical stimulation and pleasure giving devices add a whole new meaning to ‘wearable computing’. Yet, behind every online Avatar, every Dirrogate, is a human operator. Now consider: What if one of these “Fundawear” sessions were recorded?

Picture the data stream for each actuator in the garment, stored in a file: a feel-stream, unique to the person who created it. We could then replay this and re-experience the signature touch of a loved one at any time, even long after they are gone. Would such a situation qualify as a partial or crude “Mind upload”?
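To make the feel-stream idea concrete, here is a minimal sketch of how such a recording might be stored and played back. It assumes that each actuator can be addressed by an index and driven with an intensity between 0 and 1. The `FeelStream` class, its JSON file layout and the `drive` callback are all hypothetical and do not describe any real garment’s API.

```python
import json
import time
from dataclasses import dataclass, asdict

@dataclass
class Sample:
    t: float          # seconds since the start of the session
    actuator: int     # index of the actuator in the garment (assumed addressing)
    intensity: float  # 0.0 (off) to 1.0 (full strength)

class FeelStream:
    """A timestamped recording of actuator activity, unique to its creator."""

    def __init__(self, owner):
        self.owner = owner
        self.samples = []

    def record(self, t, actuator, intensity):
        # Clamp intensity so a bad reading cannot overdrive an actuator on replay.
        self.samples.append(Sample(t, actuator, max(0.0, min(1.0, intensity))))

    def save(self, path):
        with open(path, "w", encoding="utf-8") as f:
            json.dump({"owner": self.owner,
                       "samples": [asdict(s) for s in self.samples]}, f)

    @classmethod
    def load(cls, path):
        with open(path, encoding="utf-8") as f:
            data = json.load(f)
        stream = cls(data["owner"])
        stream.samples = [Sample(**s) for s in data["samples"]]
        return stream

    def replay(self, drive, speed=1.0, sleep=time.sleep):
        """Call drive(actuator, intensity) for each sample, keeping original timing."""
        last_t = 0.0
        for s in sorted(self.samples, key=lambda s: s.t):
            sleep((s.t - last_t) / speed)
            drive(s.actuator, s.intensity)
            last_t = s.t
```

In use, `drive` would be whatever function actually talks to the garment. Passing `print` instead is enough to watch a saved session play back in order.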

Mind Uploading – A practical approach.

Using Augmented Reality hardware, a person can see and experience interaction with a Dirrogate, irrespective of whether the Dirrogate is remotely operated by a human or driven by prerecorded subroutines under playback control of an AI. Mind uploading [at this stage of our technological evolution] does not have to be a full-blown simulation of the mind.

Consider the case of a Google Car. Could it be feasible that a human operator remotely ‘drive’ the car with visual feedback from the car’s on-board environment analysis cameras? Any AI in the car could be used on an as-needed basis. Now this might not be the aim of a driver-less car, and why would you need your Dirrogate to physically drive when in essence you could tele-travel to any location?

Human Shape Shifters:

Reasons could be as simple as needing to transport physical cargo to places where home delivery is not offered. Your Dirrogate could drive the car. Once at the location [hardware depot], your Dirrogate could merge with the on-board computer of an articulated motorized shopping cart. Checkout counter staff see your Dirrogate augmented in the real world via their visors. You then steer the cart to the parking lot, load in cargo [via the cart’s articulated arm or a helper] and drive home. In such a scenario, a mind upload has swapped physical “bodies” as needed to complete a task.

If that use made your eyes roll…here’s a real life example:

Video: http://www.youtube.com/watch?v=sJaYS1xkDEU

Devon Carrow, a 2nd grader, has a life-threatening illness that keeps him away from school. He sends his “avatar”, a robot called VGo.

In the case of a Dirrogate, if the classroom teacher wore an AR visor, she could “see” Devon’s Dirrogate sitting at his desk. A mechanical robot body would be optional. An overhead camera could project the entire Augmented classroom so all children could be aware of his presence. As AR eye-wear becomes more affordable, individual students could interact with Dirrogates. Such use of Dirrogates does fit in completely with the betterment-of-the-human-condition argument, especially if the Dirrogate operator is a human who could come into harm’s way in the real world.

While we simultaneously work on longevity and eliminating deadly diseases, both noble causes, we have to come to terms with the fact that biology has one up on us in the virus department as of today. Epidemic outbreaks such as SARS can keep schools closed. Would it not make sense to maintain the communal ethos of school attendance and classroom interaction by transhumanizing ourselves…digitally?

Does the above example qualify as Mind Uploading? Not in the traditional definition of the term. But looking at it from a different perspective, the 2nd grader has uploaded his mind to a robot.

Dirrogate Immortality via Quantum Archeology:

Below is a passage from the story, the literal significance of which casual readers of science fiction miss:

“Look at her,” I said. “I don’t want her to be just a memory. I want to keep her memory alive. That day, the Wizer was part of the reason for three deaths. Today, it’s keeping me from dying inside.”

“Help me, Krish,” I said. “Help me keep her memory alive.” He was listening. He wiped his eyes with his hands. I took the Wizer off. “Put it back on,” he said.

A closer look at the Wizer – [visor with Augmented Intelligence built in.]

The preceding excerpt from the story talks about resurrecting her; digital-cryonics.

So, how would Quantum Archeology techniques be applied to resurrect a dead person? Every day we spend hours uploading our stream-of-consciousness to the “cloud”. Photos, videos, Instagrams, Facebook status updates, tweets. All of this is data that can be and is being mined by Deep Learning systems. There’s no prize for guessing who the biggest investor and investigator of Deep Learning is.

Quantum Archeology gets a helping hand with all the digital breadcrumbs we’re leaving around us in this century. The question is: Is that enough information for us to Create a Mind?

Mind Uploading – Libraries and Subroutines:

A more relevant question to ask is, should we attempt to build a mind from the ground up, or start by collecting subroutines and libraries unique to a particular person? Earlier on in the article, it was suggested that by recording a ‘Fundawear’ session, we could re-experience someone’s signature intimate touch. Using Deep Learning, can personality libraries be built?

A related question to answer is: Wouldn’t it make everything ‘artificial’ and be a degraded version of the original? To attempt to answer such a question, let’s look around us today. Aren’t we already degrading our sense of hearing, for instance, when we listen to hour after hour of MP3 music encoded at 128 kbps or less? How about every time we’ve come to rely on Google’s “did you mean” or Microsoft’s red squiggly line to correct even our simple spellings?

Now, it gets interesting… since we have mind upload “libraries”, we are at liberty to borrow subroutines from more accomplished humans, to augment our own intelligence.

Will the near future allow us to choose a Dirrogate partner with the creative thinking of one person’s personality upload, the intimate skill-set of another and… you get the picture? Most people lead routine 9 to 5 lives. That does not mean that they are not missed by loved ones after they have completed their biological life-cycle. Resurrecting or simulating such minds is much easier than, say, re-animating Einstein.

In the story, Krish, on digitally resurrecting his father, recounts:

“After I saw Maya, I had to,” he said. “I’ve used her same frame structure for the newspaper reading. Last night I went through old photos, his things, his books,” his voice was low. “I’m feeding them into the frame. This was his life for the past two years before the cancer claimed him. Every evening he would sit in this chair in the old house and read his paper.”

I listened in silence as he spoke. Tactile receptors weren’t needed to experience pain. Tone of voice transported those spores just as easily.

“It was easy to create a frame for him, Dan,” he said. “In the time that the cancer was eating away at him, the day’s routine became more predictable. At first he would still go to work, then come home and spend time with us. Then he couldn’t go anymore and he was at home all day. I knew his routine so well it took me 15 minutes to feed it in. There was no need for any random branches.”

I turned to look at him. The Wizer hid his eyes well. “Krish,” I said. “You know what the best part about having him back is? It does not have to be the way it was. You can re-define his routine. Ask your mom what made your dad happy and feed that in. Build on old memories, build new ones and feed those in. You’re the AI designer… bend the rules.”

“I dare not show her anything like this,” he said. “She would never understand. There’s something not right about resurrecting the dead. There’s a reason why people say rest in peace.”

Who is the real Transhuman?

Is it a person who has augmented their physical self or augmented one of their five primary senses? Or is it a human who has successfully re-wired their brain and their mind to accept another augmented human and the tenets of Transhumanism?

“He said perception is in the eye of the beholder… or something to that effect.”

“Maybe he said realism?” I offered.

“Yeah. Maybe. Turns out he is a believer and subscribes to the concept of transhumanism,” Krish said, adjusting the Wizer on the bridge of his nose. “He believes the catalyst for widespread acceptance of transhumanism has to be based on visual fidelity or the entire construct will be stymied by the human brain and mind.”

“Hmm… the uncanny valley effect? It has to be love at first sight, if we are to accept an augmented person huh.”

“Didn’t know you followed the movement,” he said.

“Look around us. Am I really here in person?”

“Point taken,” he said.

While taking the noble cause of Transhumanism forward, we have to address one truism that was put forward in the movie, The Terminator: “It’s in your nature to destroy yourselves.”

When we eventually reach a full mind-upload stage and have the ability to swap or borrow libraries from other ‘minds’, will personality traits of greed still be floating around as rogue libraries? Perhaps the common man is right: a Dystopian future is on the cards, and that is why science fiction writers gravitate toward dystopian worlds.

Could this change as we progress from transhuman to post-human?

In building a road-map for Transhumanism, we need to present and evangelize more to the common man, in language and scenarios they can identify with. That is one of the main reasons Memories with Maya features settings and language that, at times, border on juvenile fiction. Concepts such as life extension, reversal of aging and immortality can be made to resonate better with laypeople when presented in the right context. There is a reason that Vampire stories are on the nation’s best seller lists.

People are intrigued and interested in immortality.

Memories with Maya – The Dirrogate on Amazon: http://www.amazon.com/Memories-With-Maya-Clyde-Dsouza/dp/1482514885

For more on the science used in the book, visit: Http://www.dirrogate.com