Services to include spotting racial bias and developing guidelines around AI projects.

Denis Shiryaev uses algorithms to colorize and sharpen old movies, bumping them up to a smooth 60 frames per second. The result is a stunning glimpse at the past.

This is a working proof of concept of the NeuralLace. It can detect limb motion and smell sensation via neural implants in the brain. So far it has only been tested in pigs, but an early use case might be a digital workaround for spinal cord injuries. The real long-term goal, however, is to implant an internet-enabled AI into your brain.

Elon Musk showed off Neuralink’s new implantable brain chip and demonstrated it working in real time on a pig.

The White House on Wednesday will announce that federal agencies and their private sector partners are committing more than $1 billion over the next five years to establish 12 new research institutes focused on artificial intelligence and quantum information sciences.

The effort is designed to ensure the U.S. remains globally competitive in AI and quantum technologies, administration officials said.

Why underwater robots are vacuuming up lionfish.

This tiny battery could change the game for micro robots.

Abstract: Advances in high speed imaging techniques have opened new possibilities for capturing ultrafast phenomena such as light propagation in air or through media. Capturing light-in-flight in 3-dimensional xyt-space has been reported based on various types of imaging systems, whereas reconstruction of light-in-flight information in the fourth dimension z has been a challenge. We demonstrate the first 4-dimensional light-in-flight imaging based on the observation of a superluminal motion captured by a new time-gated megapixel single-photon avalanche diode camera. A high resolution light-in-flight video is generated with no laser scanning, camera translation, interpolation, or dark noise subtraction. A machine learning technique is applied to analyze the measured spatio-temporal data set. A theoretical formula is introduced to perform least-squares regression, and extra-dimensional information is recovered without prior knowledge. The algorithm relies on the mathematical formulation equivalent to the superluminal motion in astrophysics, which is scaled by a factor of a quadrillionth. The reconstructed light-in-flight trajectory shows a good agreement with the actual geometry of the light path. Our approach could potentially provide novel functionalities to high speed imaging applications such as non-line-of-sight imaging and time-resolved optical tomography.
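The abstract leans on the geometry of superluminal motion without spelling it out. As a rough illustration only, the Python sketch below uses the textbook distant-observer version: a beam moving at angle θ toward the camera appears to sweep sideways at c·cosθ/(1 - sinθ), which exceeds c whenever the beam has any component toward the camera. Fitting that apparent speed by least squares and inverting the formula recovers the hidden depth angle. The function names, the normalized c = 1, the single straight beam and the far-away observer are all our assumptions; this is not the formula from the paper, which the abstract does not reproduce.

```python
import math
import random

C = 1.0  # speed of light, normalized to 1 (assumption for this sketch)


def simulate_beam(theta, n=50, dt=0.1, noise=0.0, seed=0):
    """Simulate a straight beam in the x-z plane, seen by a distant observer on the +z axis.

    theta is the beam's angle toward the observer, measured from the x axis
    (keep it strictly between -90 and 90 degrees).
    Returns (observed_time, x) pairs: what a camera could actually record.
    """
    rng = random.Random(seed)
    samples = []
    for i in range(n):
        t = i * dt                      # true time at which the pulse passes this point
        x = C * t * math.cos(theta)     # lateral position, visible in the image
        z = C * t * math.sin(theta)     # depth toward the observer, hidden from the image
        t_obs = t - z / C               # points closer to the camera need less travel time
        if noise > 0:
            t_obs += rng.gauss(0.0, noise)  # timing jitter of the hypothetical detector
        samples.append((t_obs, x))
    return samples


def fit_apparent_speed(samples):
    """Least-squares fit of observed time against x; the inverse slope is the apparent speed.

    Time is regressed on position because, in this sketch, the noise lives in the timing.
    """
    n = len(samples)
    mean_t = sum(t for t, _ in samples) / n
    mean_x = sum(x for _, x in samples) / n
    cov = sum((t - mean_t) * (x - mean_x) for t, x in samples)
    var_x = sum((x - mean_x) ** 2 for _, x in samples)
    return var_x / cov  # 1 / slope, where slope = cov / var_x


def recover_angle(apparent_speed):
    """Invert v_app / c = cos(theta) / (1 - sin(theta)) = tan(pi/4 + theta/2)."""
    return 2.0 * math.atan(apparent_speed / C) - math.pi / 2


def recover_depth(x, theta):
    """Depth of a point on the beam, relative to the beam's start, from its lateral position."""
    return x * math.tan(theta)


samples = simulate_beam(math.radians(60), noise=0.01)
v_app = fit_apparent_speed(samples)
theta_hat = recover_angle(v_app)
print(f"apparent speed {v_app:.2f} c, recovered angle {math.degrees(theta_hat):.1f} deg")
```

At 60 degrees the noise-free apparent speed is 2 + √3, roughly 3.73 times the speed of light, which is exactly the kind of "impossible" motion the camera exploits.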

One of the biggest impediments to the adoption of new AI technologies is trust.

Now, a new tool developed by USC Viterbi Engineering researchers automatically indicates whether data and predictions generated by AI algorithms are trustworthy. Their research paper, “There Is Hope After All: Quantifying Opinion and Trustworthiness in Neural Networks” by Mingxi Cheng, Shahin Nazarian and Paul Bogdan of the USC Cyber Physical Systems Group, was featured in Frontiers in Artificial Intelligence.

Neural networks are a type of artificial intelligence that is modeled after the brain and generates predictions. But can the predictions these neural networks generate be trusted? One of the key barriers to the adoption of self-driving cars is that the vehicles must act as independent decision-makers on autopilot: they have to quickly recognize objects on the road (whether an object is a speed bump, an inanimate object, a pet or a child) and decide how to act if another vehicle swerves toward them.

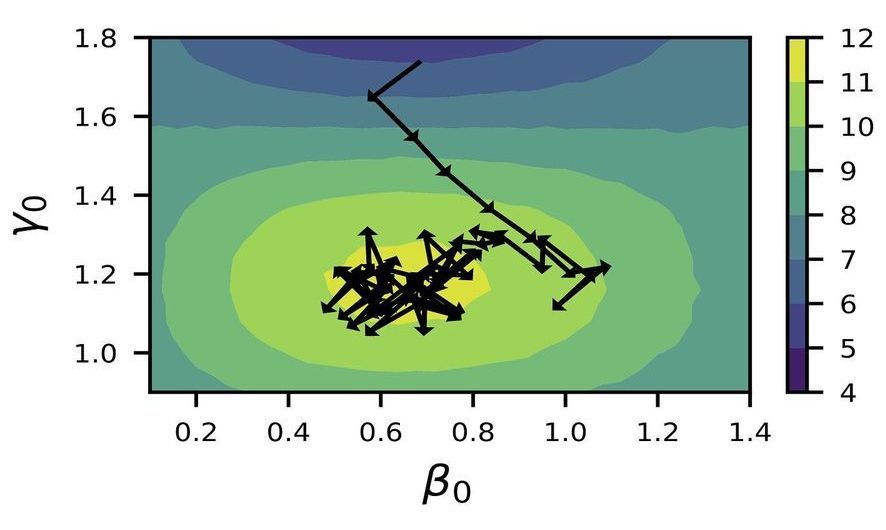

Recent advancements in quantum computing have driven the scientific community’s quest to solve a certain class of complex problems for which quantum computers would be better suited than traditional supercomputers. To improve the efficiency with which quantum computers can solve these problems, scientists are investigating the use of artificial intelligence approaches.

In a new study, scientists at the U.S. Department of Energy’s (DOE) Argonne National Laboratory have developed a new algorithm based on reinforcement learning to find the optimal parameters for the Quantum Approximate Optimization Algorithm (QAOA), which allows a quantum computer to solve certain combinatorial problems such as those that arise in materials design, chemistry and wireless communications.
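The article doesn't describe Argonne's reinforcement-learning agent in detail, so the Python sketch below swaps in the simplest possible optimizer, a brute-force grid search, just to show what "finding the optimal parameters" means. It classically simulates depth-1 QAOA for MaxCut (our choice of combinatorial problem) on a hypothetical four-node ring graph. The circuit has two angles, γ for the cost layer and β for the mixer layer, and the optimizer's job is to pick the pair that maximizes the expected cut size. The graph, the grid and all function names are illustrative assumptions; roughly speaking, a reinforcement-learning approach would replace `grid_search` with a learned policy that proposes angles.

```python
import cmath
import math
from itertools import product


def cut_value(bits, edges):
    """Number of edges whose endpoints fall on different sides of the cut encoded by bits."""
    return sum(1 for u, v in edges if ((bits >> u) & 1) != ((bits >> v) & 1))


def qaoa_expectation(gamma, beta, n, edges):
    """Expected cut size of the depth-1 QAOA state, via exact statevector simulation."""
    dim = 1 << n
    costs = [cut_value(z, edges) for z in range(dim)]
    # Start in the uniform superposition |+>^n.
    amps = [complex(1 / math.sqrt(dim))] * dim
    # Cost layer exp(-i*gamma*C): diagonal, so each basis state just picks up a phase.
    amps = [a * cmath.exp(-1j * gamma * c) for a, c in zip(amps, costs)]
    # Mixer layer exp(-i*beta*X_j) applied to every qubit j.
    cos_b, sin_b = math.cos(beta), math.sin(beta)
    for j in range(n):
        mask = 1 << j
        for i in range(dim):
            if (i & mask) == 0:
                a0, a1 = amps[i], amps[i | mask]
                amps[i] = cos_b * a0 - 1j * sin_b * a1
                amps[i | mask] = -1j * sin_b * a0 + cos_b * a1
    # Measurement probabilities |amp|^2 weight each basis state's cut size.
    return sum(abs(a) ** 2 * c for a, c in zip(amps, costs))


def grid_search(n, edges, steps=16):
    """Try every (gamma, beta) on a coarse grid; a stand-in for a learned optimizer."""
    gammas = [k * math.pi / steps for k in range(steps)]       # gamma in [0, pi)
    betas = [k * math.pi / steps for k in range(steps // 2)]   # beta in [0, pi/2)
    return max(
        ((qaoa_expectation(g, b, n, edges), g, b) for g, b in product(gammas, betas)),
        key=lambda result: result[0],
    )


ring = [(0, 1), (1, 2), (2, 3), (3, 0)]  # hypothetical four-node ring graph
value, gamma, beta = grid_search(4, ring)
print(f"expected cut {value:.3f} at gamma={gamma:.3f}, beta={beta:.3f}")
```

For this ring, depth-1 QAOA cannot do better than an expected cut of 3 out of a possible 4 edges, and this grid happens to contain the optimal angles, so the search should report about 3.000; a uniformly random cut averages 2. On larger graphs and deeper circuits the parameter space grows quickly, which is where a smarter optimizer earns its keep.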

“Combinatorial optimization problems are those for which the solution space gets exponentially larger as you expand the number of decision variables,” said Argonne computer scientist Prasanna Balaprakash. “In one traditional example, you can find the shortest route for a salesman who needs to visit a few cities once by enumerating all possible routes, but given a couple thousand cities, the number of possible routes far exceeds the number of stars in the universe; even the fastest supercomputers cannot find the shortest route in a reasonable time.”
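Balaprakash's salesman example is easy to make concrete. The Python sketch below (the function names and the four-city square are our own illustrative choices) finds the shortest tour by trying every ordering of the cities, and also counts how many distinct round trips exist: fixing the starting city and ignoring direction leaves (n - 1)!/2 of them, a number that grows even faster than an exponential.

```python
import itertools
import math


def route_length(route, dist):
    """Length of a closed tour that returns to its starting city."""
    return sum(dist[route[i]][route[(i + 1) % len(route)]] for i in range(len(route)))


def brute_force_tsp(dist):
    """Enumerate every tour that starts at city 0 and keep the shortest one."""
    n = len(dist)
    best_route, best_len = None, math.inf
    for perm in itertools.permutations(range(1, n)):
        route = (0,) + perm
        length = route_length(route, dist)
        if length < best_len:
            best_route, best_len = route, length
    return best_route, best_len


def log10_route_count(n):
    """log10 of the number of distinct undirected tours, (n - 1)! / 2, via log-gamma."""
    return (math.lgamma(n) - math.log(2)) / math.log(10)


square = [(0, 0), (1, 0), (1, 1), (0, 1)]  # hypothetical cities on a unit square
dist = [[math.dist(a, b) for b in square] for a in square]
print(brute_force_tsp(dist))  # ((0, 1, 2, 3), 4.0)
print(f"2,000 cities: about 10^{log10_route_count(2000):.0f} distinct tours")
```

Working in logarithms is the only practical way to even state the 2,000-city count, since the factorial itself has thousands of digits; enumerating those tours one by one is hopeless on any computer.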

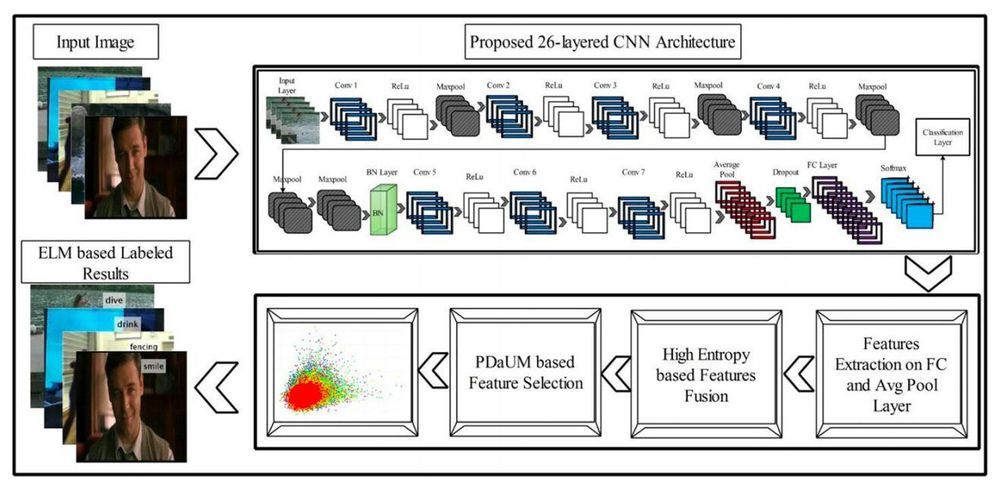

Deep learning algorithms, such as convolutional neural networks (CNNs), have achieved remarkable results on a variety of tasks, including those that involve recognizing specific people or objects in images. A task that computer scientists have often tried to tackle using deep learning is vision-based human action recognition (HAR), which specifically entails recognizing the actions of humans who have been captured in images or videos.

Researchers at HITEC University and Foundation University Islamabad in Pakistan, Sejong University and Chung-Ang University in South Korea, University of Leicester in the UK, and Prince Sultan University in Saudi Arabia have recently developed a new CNN for recognizing human actions in videos. This CNN, presented in a paper published in Springer's Multimedia Tools and Applications journal, was trained to distinguish between several human actions, including boxing, clapping, waving, jogging, running and walking.

“We designed a new 26-layered convolutional neural network (CNN) architecture for accurate complex action recognition,” the researchers wrote in their paper. “The features are extracted from the global average pooling layer and fully connected (FC) layer and fused by a proposed high entropy-based approach.”
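The quote doesn't spell out the fusion rule, so what follows is only a guess at the general idea, written in plain Python with hypothetical names. Global average pooling collapses each convolutional channel to its mean. For fusion, the sketch scores every feature by its Shannon entropy across a batch of samples (after equal-width binning, an assumption of ours), keeps the highest-entropy features from the pooling-layer and FC-layer vectors, and concatenates them. The researchers' "high entropy-based approach" may differ in how it scores, selects or combines features.

```python
import math
from collections import Counter


def global_average_pool(feature_maps):
    """Average each H x W channel down to a single value (one number per channel)."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in feature_maps]


def feature_entropy(values, bins=8):
    """Shannon entropy, in bits, of one feature's values after equal-width binning."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return 0.0  # a constant feature carries no information
    width = (hi - lo) / bins
    counts = Counter(min(int((v - lo) / width), bins - 1) for v in values)
    n = len(values)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def top_entropy_indices(vectors, k):
    """Indices of the k features with the highest entropy across all samples."""
    n_features = len(vectors[0])
    scores = [feature_entropy([vec[j] for vec in vectors]) for j in range(n_features)]
    return sorted(range(n_features), key=lambda j: scores[j], reverse=True)[:k]


def entropy_fuse(gap_vectors, fc_vectors, k_gap, k_fc):
    """Keep the highest-entropy features from each source, then concatenate per sample."""
    gap_idx = top_entropy_indices(gap_vectors, k_gap)
    fc_idx = top_entropy_indices(fc_vectors, k_fc)
    return [
        [g[j] for j in gap_idx] + [f[j] for j in fc_idx]
        for g, f in zip(gap_vectors, fc_vectors)
    ]


# Toy batch of three samples: GAP feature 0 is constant, so it should be dropped.
gap = [[5.0, 0.0], [5.0, 1.0], [5.0, 2.0]]
fc = [[1.0], [2.0], [3.0]]
print(entropy_fuse(gap, fc, k_gap=1, k_fc=1))  # [[0.0, 1.0], [1.0, 2.0], [2.0, 3.0]]
```

Selecting by entropy is one simple way to favor features that actually vary across samples; in a real network the GAP and FC vectors would have hundreds or thousands of entries, and the fused vector would typically feed a separate classifier.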