“Companies around the world are bracing themselves for an avalanche of cyber security regulation, as governments scramble to introduce rules forcing corporate groups to build stronger defences against catastrophic hacks.”

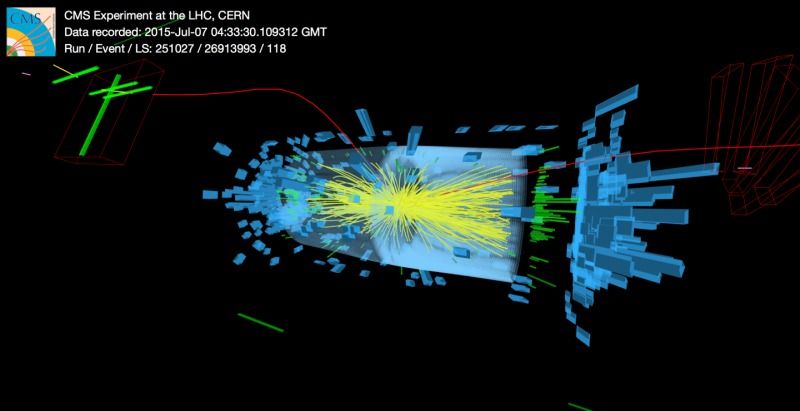

July 2015, as you know, was all systems go for CERN’s Large Hadron Collider (LHC). On a Saturday evening, proton collisions resumed at the LHC and the experiments began collecting data once again. With the observation of the Higgs already in our back pocket, it was time to turn up the dial and push the LHC into double-digit TeV energy levels. Personally, I didn’t blink an eye on hearing that large amounts of data were being collected at every turn. But I was quite surprised to learn the amount being collected and processed each day: about one petabyte.

Approximately 600 million times per second, particles collide within the LHC. The digitized summary of each collision is recorded as a “collision event”. Physicists must then sift through the 30 petabytes or so of data produced annually to determine if the collisions have thrown up any interesting physics. Needless to say, the hunt is on!

The Data Center processes about one petabyte of data every day, the equivalent of around 210,000 DVDs. The center hosts 11,000 servers with 100,000 processor cores. Some 6,000 changes to the database are performed every second.
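As a rough check on the DVD comparison, the arithmetic can be sketched as below. The 4.7 GB single-layer DVD capacity and the use of decimal (SI) units are assumptions; the figures quoted above do not say which were used.

```python
# Rough check of the "one petabyte is around 210,000 DVDs" comparison.
# Assumptions (not stated in the article): decimal units and a
# 4.7 GB single-layer DVD.
PETABYTE_BYTES = 10**15
DVD_BYTES = 4.7 * 10**9


def dvds_per_petabyte(petabytes: float = 1.0) -> float:
    """Return how many single-layer DVDs would hold the given number of petabytes."""
    return petabytes * PETABYTE_BYTES / DVD_BYTES


if __name__ == "__main__":
    print(f"{dvds_per_petabyte():,.0f} DVDs per petabyte")
```

Under these assumptions the result comes out a little above 210,000, which is consistent with the "around 210,000" quoted above.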

[youtube_sc url=“https://youtu.be/0mgXNgD3JFU?list=PL7DEC46BD7058D7BB”]

With experiments at CERN generating such colossal amounts of data, the Data Center stores it and then sends it around the world for analysis. CERN simply does not have the computing or financial resources to crunch all of the data on site, so in 2002 it turned to grid computing to share the burden with computer centres around the world. The Worldwide LHC Computing Grid (WLCG), a distributed computing infrastructure arranged in tiers, gives a community of over 8,000 physicists near real-time access to LHC data. The Grid runs more than two million jobs per day. At peak rates, 10 gigabytes of data may be transferred from its servers every second.

By early 2013 CERN had increased the power capacity of the centre from 2.9 MW to 3.5 MW, allowing the installation of more computers. In parallel, improvements in energy-efficiency implemented in 2011 have led to an estimated energy saving of 4.5 GWh per year.

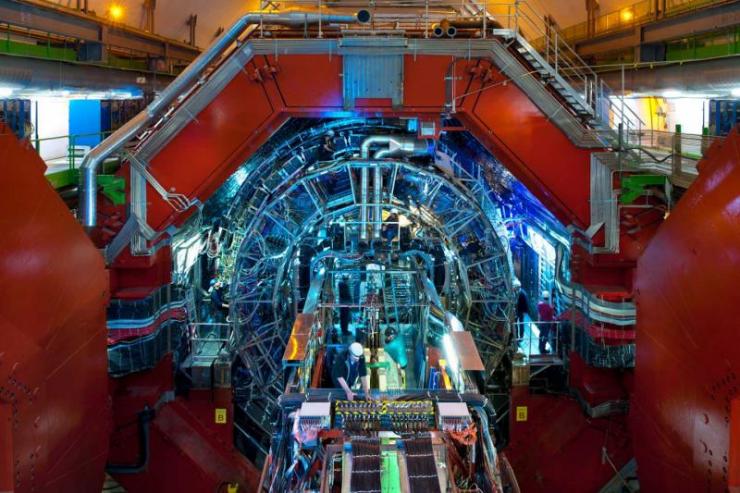

Image: CERN

[youtube_sc url=“https://youtu.be/jDC3-QSiLB4”]

PROCESSING THE DATA (processing info via CERN)

Subsequently hundreds of thousands of computers from around the world come into action: harnessed in a distributed computing service, they form the Worldwide LHC Computing Grid (WLCG), which provides the resources to store, distribute, and process the LHC data. WLCG combines the power of more than 170 collaborating centres in 36 countries around the world, which are linked to CERN. Every day WLCG processes more than 1.5 million ‘jobs’, corresponding to a single computer running for more than 600 years.
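To get a feel for the "600 years" figure, here is a minimal back-of-the-envelope sketch. It assumes (this is an interpretation, not something CERN states here) that the 600 years is the total single-computer time consumed by one day of jobs, and it uses the lower bounds quoted above.

```python
# Back-of-the-envelope reading of "1.5 million jobs per day, corresponding
# to a single computer running for 600 years".
# Assumption (not from the article): the 600 years is the total
# single-computer time used by one day's worth of jobs.
JOBS_PER_DAY = 1_500_000
COMPUTER_YEARS_PER_DAY = 600
DAYS_PER_YEAR = 365.25
HOURS_PER_YEAR = DAYS_PER_YEAR * 24


def average_job_hours(jobs: int = JOBS_PER_DAY,
                      computer_years: float = COMPUTER_YEARS_PER_DAY) -> float:
    """Average single-computer hours per job implied by the two figures."""
    return computer_years * HOURS_PER_YEAR / jobs


def always_on_computers(computer_years: float = COMPUTER_YEARS_PER_DAY) -> float:
    """Computers that would have to run around the clock to deliver
    that much computing time within a single day."""
    return computer_years * DAYS_PER_YEAR


if __name__ == "__main__":
    print(f"average job length: {average_job_hours():.1f} hours")
    print(f"always-on computers needed: {always_on_computers():,.0f}")
```

Read this way, the figures imply jobs that average a few hours each and a fleet on the order of a couple of hundred thousand machines, which fits the "hundreds of thousands of computers" mentioned above.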

The data flow from all four experiments for Run 2 is anticipated to be about 25 GB/s (gigabytes per second).

In July, the LHCb experiment reported observation of an entire new class of particles:

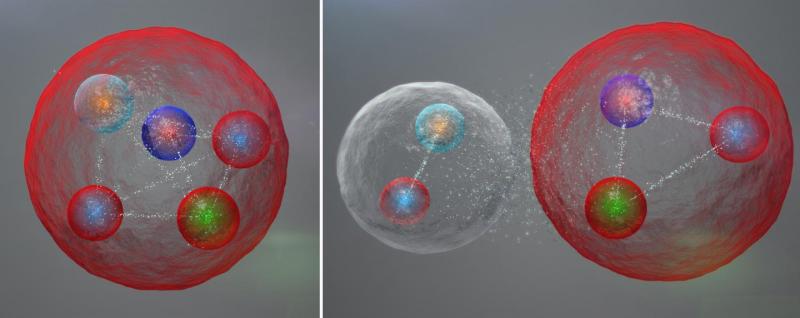

Exotic Pentaquark Particles (Image: CERN)

Possible layout of the quarks in a pentaquark particle. The five quarks might be tightly bound (left). They might also be assembled into a meson (one quark and one antiquark) and a baryon (three quarks), weakly bound together.

The LHCb experiment at CERN’s LHC has reported the discovery of a class of particles known as pentaquarks. In short, “The pentaquark is not just any new particle,” said LHCb spokesperson Guy Wilkinson. “It represents a way to aggregate quarks, namely the fundamental constituents of ordinary protons and neutrons, in a pattern that has never been observed before in over 50 years of experimental searches. Studying its properties may allow us to understand better how ordinary matter, the protons and neutrons from which we’re all made, is constituted.”

[youtube_sc url=“https://youtu.be/02uOm5Kl5ls”]

Our understanding of the structure of matter was revolutionized in 1964 when American physicist Murray Gell-Mann proposed that a category of particles known as baryons, which includes protons and neutrons, are composed of three fractionally charged objects called quarks, and that another category, mesons, are formed of quark-antiquark pairs. This quark model also allows the existence of other quark composite states, such as pentaquarks composed of four quarks and an antiquark.

Until now, however, no conclusive evidence for pentaquarks had been seen.

Earlier experiments that have searched for pentaquarks have proved inconclusive. The next step in the analysis will be to study how the quarks are bound together within the pentaquarks.

“The quarks could be tightly bound,” said LHCb physicist Liming Zhang of Tsinghua University, “or they could be loosely bound in a sort of meson-baryon molecule, in which the meson and baryon feel a residual strong force similar to the one binding protons and neutrons to form nuclei.” More studies will be needed to distinguish between these possibilities, and to see what else pentaquarks can teach us!

August 18th, 2015

CERN Experiment Confirms Matter-Antimatter CPT Symmetry

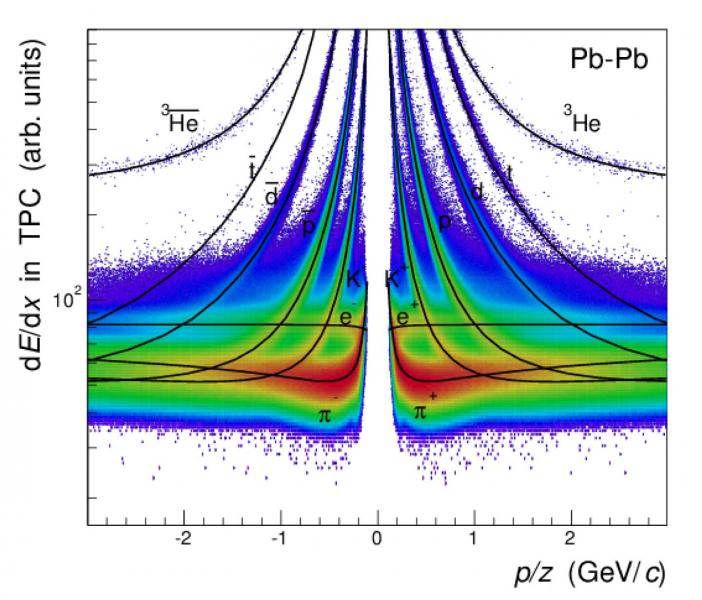

For Light Nuclei, Antinuclei (Image: CERN)

Days after scientists at CERN’s Baryon-Antibaryon Symmetry Experiment (BASE) measured the mass-to-charge ratio of a proton and its antimatter particle, the antiproton, the ALICE experiment at the European organization reported similar measurements for light nuclei and antinuclei.

The measurements, made with unprecedented precision, add to growing scientific data confirming that matter and antimatter are true mirror images.

Antimatter shares the same mass as its matter counterpart, but has opposite electric charge. The electron, for instance, has a positively charged antimatter equivalent called the positron. Scientists believe that the Big Bang created equal quantities of matter and antimatter 13.8 billion years ago. However, for reasons yet unknown, matter prevailed, creating everything we see around us today, from the smallest microbe on Earth to the largest galaxy in the universe.

Last week, in a paper published in the journal Nature, researchers reported a significant step toward solving this long-standing mystery of the universe. According to the study, 13,000 measurements over a 35-day period show, with unparalleled precision, that protons and antiprotons have identical mass-to-charge ratios.

The experiment tested a central tenet of the Standard Model of particle physics, known as the Charge, Parity, and Time Reversal (CPT) symmetry. If CPT symmetry holds, a system remains unchanged when three fundamental properties are reversed together: charge, parity (which refers to a 180-degree flip in spatial configuration), and time.

The latest study takes the research into this symmetry further. The ALICE measurements show that CPT symmetry holds true for light nuclei such as deuterons (hydrogen nuclei with an additional neutron) and antideuterons, as well as for helium-3 nuclei (two protons plus a neutron) and antihelium-3 nuclei. The experiment, which also analyzed the curvature of these particles’ tracks in the ALICE detector’s magnetic field and their time of flight, improves on the existing measurements by a factor of up to 100.

IN CLOSING..

A violation of CPT would not only hint at the existence of physics beyond the Standard Model — which isn’t complete yet — it would also help us understand why the universe, as we know it, is completely devoid of antimatter.

UNTIL THEN…

ORIGINAL ARTICLE POSTING via Michael Phillips LinkedIN Pulse @ http://goo.gl/ApdTL6

“It would have been crazy to say just a few years ago. But today, Google produces better-designed software than any other tech behemoth. If you don’t believe that, then set down your Apple-flavored Kool-Aid. Take a cleansing breath, open your mind, and compare Android and iOS.”

The recent scandal involving the surveillance of the Associated Press and Fox News by the United States Justice Department has focused attention on the erosion of privacy and freedom of speech in recent years. But before we simply attribute these events to the ethical failings of Attorney General Eric Holder and his staff, we also should consider the technological revolution powering this incident, and thousands like it. It would appear that bureaucrats simply are seduced by the ease with which information can be gathered and manipulated. At the rate that technologies for the collection and fabrication of information are evolving, what is now available to law enforcement and intelligence agencies in the United States, and around the world, will soon be available to individuals and small groups.

We must come to terms with the current information revolution and take the first steps to form global institutions that will assure that our society, and our governments, can continue to function through this chaotic and disconcerting period. The exponential increase in the power of computers will mean that changes will go far beyond the limits of slow-moving human government. We will need to build new institutions to respond to the crisis that are substantial and long-term. It will not be a matter that can be solved by adding a new division to Homeland Security or Google.

We do not have any choice. To make light of the crisis means allowing shadowy organizations to usurp for themselves immense power through the collection and distortion of information. Failure to keep up with technological change in an institutional sense will mean that in the future government will be at best a symbolic façade of authority with little authority or capacity to respond to the threats of information manipulation. In the worst case scenario, corporations and government agencies could degenerate into warring factions, a new form of feudalism in which invisible forces use their control of information to wage murky wars for global domination.

No degree of moral propriety among public servants, or corporate leaders, can stop the explosion of spying and the propagation of false information that we will witness over the next decade. The most significant factor behind this development will be Moore’s Law, which holds that the number of transistors that can be placed economically on a chip doubles roughly every 18 months (and the cost of storage has halved every 14 months), and not the moral decline of citizens. This exponential increase in our capability to gather, store, share, alter and fabricate information of every form will offer tremendous opportunities for the development of new technologies. But the rate of change of computational power is so much faster than the rate at which human institutions can adapt, let alone the rate at which the human species evolves, that we will face devastating existential challenges to human civilization.
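To make the scale of that exponential growth concrete, here is a minimal sketch of the compounding involved. It takes the 18-month doubling and 14-month halving periods quoted above at face value and assumes they hold steadily over the whole span, which is a simplification; the function names are illustrative only.

```python
# Compounding implied by an 18-month doubling period (chip capacity) and a
# 14-month halving period (storage cost).
# Assumption: both rates hold steadily over the whole period considered.

def growth_factor(years: float, doubling_months: float = 18.0) -> float:
    """Multiplicative increase after `years` when a quantity doubles
    every `doubling_months` months."""
    return 2.0 ** (years * 12.0 / doubling_months)


def relative_cost(years: float, halving_months: float = 14.0) -> float:
    """Fraction of the original cost remaining after `years` when the
    cost halves every `halving_months` months."""
    return 0.5 ** (years * 12.0 / halving_months)


if __name__ == "__main__":
    decade = 10
    print(f"capacity after {decade} years: x{growth_factor(decade):.0f}")
    print(f"storage cost after {decade} years: {relative_cost(decade):.2%} of today's")
```

Over a single decade these assumptions give roughly a hundredfold increase in chip capacity and storage costing well under one percent of its starting price, a pace no institution adapts to on its own.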

The Challenges we face as a result of the Information Revolution

The dropping cost of computational power means that individuals can gather gigantic amounts of information and integrate it into meaningful intelligence about thousands, or millions, of individuals with minimal investment. It will become dramatically easier to extract personal information from garbage, from recordings of people walking up and down the street, and from aerial photographs, to combine it with other seemingly worthless material, and to organize the result in a meaningful manner. Facial recognition, speech recognition and instantaneous speech-to-text will become literally child’s play. Inexpensive, tiny surveillance drones will be readily available to collect information on people 24/7 for analysis. My son recently received a helicopter drone with a camera as a present that cost less than $40. In a few years, elaborate tracking of the activities of thousands, or millions, of people will be just as easy.

Continue reading “The Impending Crisis of Data: Do We Need a Constitution of Information?”