A team of researchers using the Microsoft HoloLens mixed reality platform has created what is believed to be the first interactive holographic mapping system, e.

From the understated opulence of a Bentley to the stalwart family minivan to the utilitarian pickup, Americans know that the car you drive is an outward statement of personality. You are what you drive, as the saying goes, and researchers at Stanford have just taken that maxim to a new level.

Using computer algorithms that can see and learn, they have analyzed millions of publicly available images on Google Street View. The researchers say they can use that knowledge to determine the political leanings of a given neighborhood just by looking at the cars on the streets.

“Using easily obtainable visual data, we can learn so much about our communities, on par with some information that takes billions of dollars to obtain via census surveys. More importantly, this research opens up more possibilities of virtually continuous study of our society using sometimes cheaply available visual data,” said Fei-Fei Li, an associate professor of computer science at Stanford and director of the Stanford Artificial Intelligence Lab and the Stanford Vision Lab, where the work was done.

But Ford appears to have found a unique way to use a robot. We’ve seen some interesting applications for Boston Dynamics’ Spot robot, and the latest takes the 70-pound dog-like robot to the floors of a Ford transmission manufacturing plant.

These plants are reportedly so old, and have been retooled so many times, that Ford is unsure whether it has accurate floor plans. With the end goal of modernizing and retooling the plants, Ford is sending Spot through them, using the robot's laser scanning and imaging technology to produce a detailed map.

According to TechCrunch, the manual facility mapping process is time-intensive, with lots of stops and starts as cameras are set up and repositioned station to station. By using two continuously roving robots, Ford can do the job in about half the time. The other benefit is Spot’s size: these little critters can access areas that humans can’t easily get to, and with five cameras they can sometimes provide a more complete picture of their surroundings.

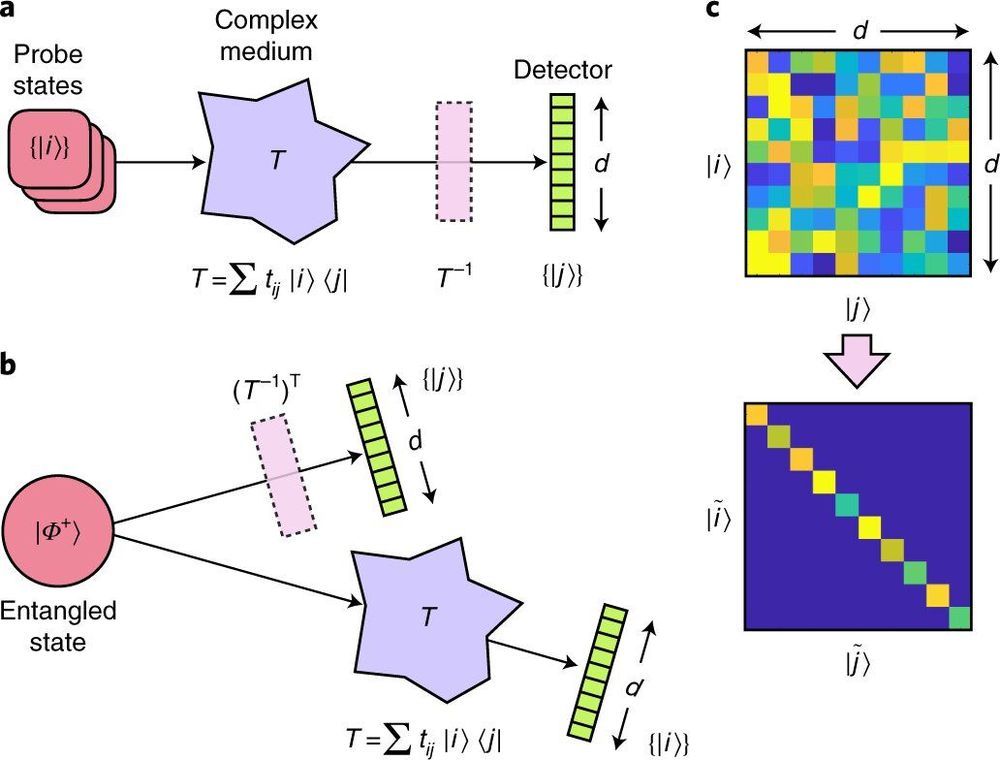

A team of researchers from Heriot-Watt University, the Indian Institute of Technology and the University of Glasgow has demonstrated a way to transport entangled particles through a commercial fiber cable with 84.4% fidelity. In their paper published in the journal Nature Physics, the group describes using a unique attribute of entanglement to achieve such high fidelity. Andrew Forbes and Isaac Nape of the University of the Witwatersrand have published a News & Views piece in the same journal issue outlining the problems with sending entangled particles across fiber cables and the work done by the team in this new effort.

The study of entanglement, its properties and its possible uses has made headlines because of its novelty and potential applications, particularly in quantum computers. One of the roadblocks to its use as an international computer communications medium is noise encountered along the path through fiber cables, which destroys the information the particles carry. In this new effort, the researchers have found a possible solution to the problem: using a unique attribute of entanglement to reduce losses due to noise.

The work exploited a property of quantum physics that allows for mapping the medium (fiber cable) onto the quantum state of a particle moving through it. In essence, the entangled state of a particle (or photon in this context) created an image of the fiber cable, which allowed for reversing the scattering within it as a photon was transmitted. Furthermore, the descrambling could be achieved without having anything touch either the fiber or the photon that moved through it. More specifically, the researchers sent one of a pair of photons through a complex medium, but not the other. Both were directed toward spatial light modulators, then on to detectors, and finally to a device that performed coincidence counting. In their setup, light from the photon that did not pass through the complex medium propagated backward from the detector, allowing the photon to appear as if it had emerged from the crystal as the other photon.
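The idea that scrambling on one photon can be undone by acting only on its partner can be illustrated with a small numerical sketch. This is not the researchers' method or their data. It is an idealized toy model that assumes a lossless fiber represented by a random unitary matrix `T`, a maximally entangled pair in `dim` spatial modes, and a perfect correction (the complex conjugate of `T`) applied to the photon that never entered the fiber. All names (`random_unitary`, `max_entangled`, `fidelity`) are made up for illustration.

```python
import numpy as np


def random_unitary(dim, rng):
    """Toy stand-in for a scrambling fiber: a random unitary from a QR decomposition."""
    z = rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))
    q, _ = np.linalg.qr(z)
    return q


def max_entangled(dim):
    """Maximally entangled two-photon state sum_i |i>|i> / sqrt(dim)."""
    return np.eye(dim, dtype=complex).reshape(-1) / np.sqrt(dim)


def fidelity(a, b):
    """Overlap |<a|b>|^2 between two normalized pure states."""
    return abs(np.vdot(a, b)) ** 2


rng = np.random.default_rng(0)
dim = 4                       # number of spatial modes (assumption)
T = random_unitary(dim, rng)  # hypothetical transmission matrix of the fiber
identity = np.eye(dim)

phi = max_entangled(dim)

# Photon A passes through the fiber; photon B is left alone.
scrambled = np.kron(T, identity) @ phi

# Correction applied ONLY to photon B: the complex conjugate of T.
# For a maximally entangled state, (T x conj(T)) leaves the state unchanged.
restored = np.kron(identity, T.conj()) @ scrambled

print("fidelity after scrambling:", fidelity(phi, scrambled))
print("fidelity after correcting photon B:", fidelity(phi, restored))
```

In this idealized model the second fidelity comes out at essentially 1, while the reported experimental figure was 84.4%; a real fiber adds loss and noise that the sketch deliberately ignores.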

But have you ever wondered: how well do those maps represent my brain? After all, no two brains are alike. And if we’re ever going to reverse-engineer the brain as a computer simulation—as Europe’s Human Brain Project is trying to do—shouldn’t we ask whose brain they’re hoping to simulate?

Enter a new kind of map: the Julich-Brain, a probabilistic map of human brains that accounts for individual differences using a computational framework. Rather than a static PDF of a brain map, the Julich-Brain atlas is dynamic: it continuously changes to incorporate more recent brain mapping results. So far, the map has cellular-level data from over 24,000 thinly sliced sections from 23 postmortem brains covering most years of adulthood. The atlas can also continuously adapt to progress in mapping technologies to aid brain modeling and simulation, and link to other atlases and alternatives.

In other words, rather than “just another” human brain map, the Julich-Brain atlas is its own neuromapping API—one that could unite previous brain-mapping efforts with more modern methods.
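To make "probabilistic" and "dynamic" concrete, here is a minimal sketch of how a probability map can be built and updated in general. It is an assumption-laden illustration, not the Julich-Brain pipeline: it supposes each brain contributes a binary mask marking where one area was found, that all masks are already aligned to a common grid, and that the map is simply the per-voxel fraction of brains in which the area appears. The tiny 2x3 grids and the function names `probability_map` and `add_brain` are invented for the example.

```python
import numpy as np


def probability_map(masks):
    """Per-voxel fraction of brains in which the area is present."""
    masks = np.asarray(masks, dtype=float)
    return masks.mean(axis=0)


def add_brain(prob_map, n_brains, new_mask):
    """Fold one more brain into an existing map without recomputing from scratch."""
    new_mask = np.asarray(new_mask, dtype=float)
    updated = (prob_map * n_brains + new_mask) / (n_brains + 1)
    return updated, n_brains + 1


# Four hypothetical brains, each a 2x3 binary mask for the same area.
masks = [
    [[0, 1, 1], [0, 1, 0]],
    [[0, 1, 0], [0, 1, 0]],
    [[1, 1, 0], [0, 0, 0]],
    [[0, 1, 1], [0, 1, 0]],
]
pmap = probability_map(masks)
print(pmap)

# A fifth brain arrives later; the map is updated in place of a rebuild.
pmap5, n = add_brain(pmap, len(masks), [[0, 1, 1], [0, 1, 1]])
print(pmap5, n)
```

A voxel with value 1.0 was labeled as part of the area in every brain, while intermediate values capture exactly the individual differences that a single-brain map would hide.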

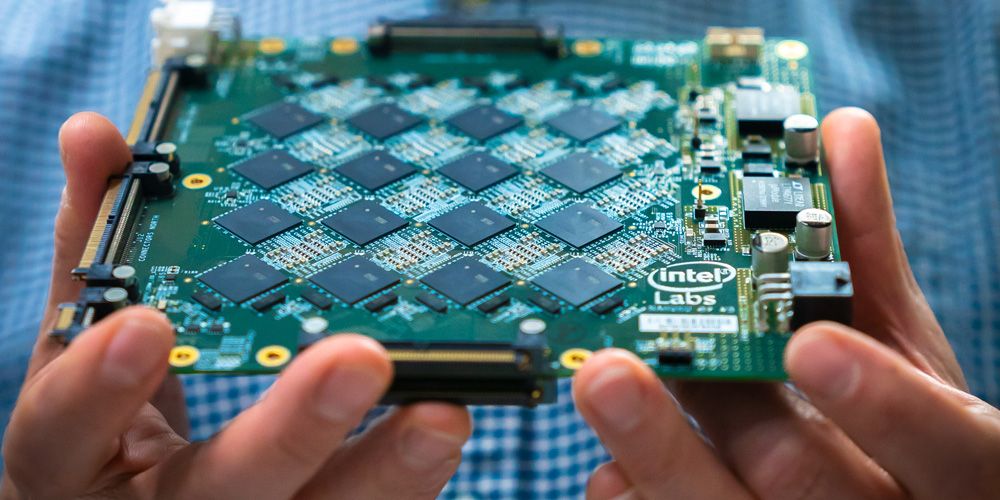

“We are impressed with the early results demonstrated as we scale Loihi to create more powerful neuromorphic systems. Pohoiki Beach will now be available to more than 60 ecosystem partners, who will use this specialized system to solve complex, compute-intensive problems.” –Rich Uhlig, managing director of Intel Labs

Why It’s Important: With the introduction of Pohoiki Beach, researchers can now efficiently scale up novel neural-inspired algorithms — such as sparse coding, simultaneous localization and mapping (SLAM), and path planning — that can learn and adapt based on data inputs. Pohoiki Beach represents a major milestone in Intel’s neuromorphic research, laying the foundation for Intel Labs to scale the architecture to 100 million neurons later this year.

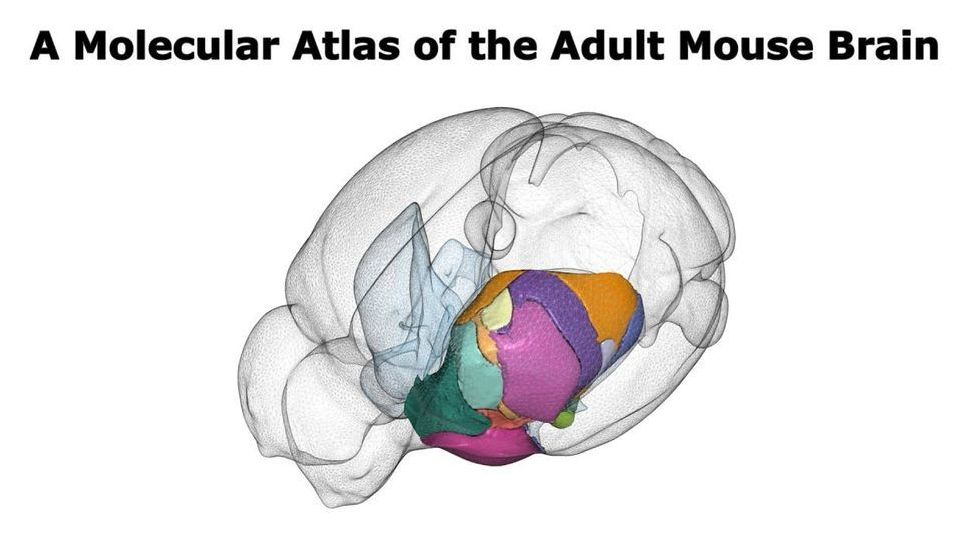

In a new study, researchers at Karolinska Institutet and KTH Royal Institute of Technology have developed a new kind of brain atlas based on an innovative method of mapping brain tissue into areas according to their molecular profile. The study is published in Science Advances.

Although many other observatories, including NASA’s Hubble Space Telescope, have previously created “deep fields” by staring at small areas of the sky for significant chunks of time, the Cosmic Evolution Early Release Science (CEERS) Survey, led by Steven L. Finkelstein of the University of Texas at Austin, will be one of the first for Webb. He and his research team will spend just over 60 hours pointing the telescope at a slice of the sky known as the Extended Groth Strip, which was observed as part of Hubble’s Cosmic Assembly Near-infrared Deep Extragalactic Legacy Survey or CANDELS.

“With Webb, we want to do the first reconnaissance for galaxies even closer to the big bang,” Finkelstein said. “It is absolutely not possible to do this research with any other telescope. Webb is able to do remarkable things at wavelengths that have been difficult to observe in the past, on the ground or in space.”

Mark Dickinson of the National Science Foundation’s National Optical-Infrared Astronomy Research Laboratory in Arizona, and one of the CEERS Survey co-investigators, gives a nod to Hubble while also looking forward to Webb’s observations. “Surveys like the Hubble Deep Field have allowed us to map the history of cosmic star formation in galaxies within a half a billion years of the big bang all the way to the present in surprising detail,” he said. “With CEERS, Webb will look even farther to add new data to those surveys.”