Open source has emerged as a powerful set of principles for solving complex problems in fields as diverse as education and physical security. With roughly 60 million Americans suffering from a chronic health condition, traditional research progressing slowly, and personalized medicine on the horizon, the time is right to apply open source to health research. Advances in technology enabling cheap, massive data collection combined with the emerging phenomena of self quantification and crowdsourcing make this plan feasible today. We can all work together to cure disease, and here’s how.

Category: lifeboat

SRA Proposal Accepted

My proposal for the Society for Risk Analysis’s annual meeting in Boston has been accepted, in oral presentation format, for the afternoon of Wednesday, December 10th, 2008. Any Lifeboat members who will be in the area at the time are more than welcome to attend. Any suggestions for content would also be greatly appreciated; speaking time is limited to 15 minutes, with 5 minutes for questions. The abstract for the paper is as follows:

Global Risk: A Quantitative Analysis

The scope and possible impact of global, long-term risks present a unique challenge to humankind. The analysis and mitigation of such risks is extremely important, as such risks have the potential to affect billions of people worldwide; however, little systematic analysis has been done to determine the best strategies for overall mitigation. Direct, case-by-case analysis can be combined with standard probability theory, particularly Laplace’s rule of succession, to calculate the probability of any given risk, the scope of the risk, and the effectiveness of potential mitigation efforts. This methodology can be applied both to well-known risks, such as global warming, nuclear war, and bio-terrorism, and lesser-known or unknown risks. Although well-known risks are shown to be a significant threat, analysis strongly suggests that avoiding the risks of technologies which have not yet been developed may pose an even greater challenge. Eventually, some type of further quantitative analysis will be necessary for effective apportionment of government resources, as traditional indicators of risk level, such as press coverage and human intuition, can be shown to be inaccurate, often by many orders of magnitude.
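For readers unfamiliar with Laplace’s rule of succession, here is a minimal sketch of the formula itself: after observing s occurrences in n trials, the estimated probability of an occurrence on the next trial is (s + 1) / (n + 2). The function name and the example numbers are illustrative assumptions, not figures from the paper, and the paper’s actual methodology is not described in the abstract.

```python
from fractions import Fraction


def rule_of_succession(occurrences: int, trials: int) -> Fraction:
    """Laplace's rule of succession: (s + 1) / (n + 2).

    `occurrences` is how many times the event was observed in `trials`
    independent observation periods (both are hypothetical inputs here).
    """
    if trials < 0 or not 0 <= occurrences <= trials:
        raise ValueError("need 0 <= occurrences <= trials")
    return Fraction(occurrences + 1, trials + 2)


if __name__ == "__main__":
    # Hypothetical example: an event never observed in 63 yearly periods.
    estimate = rule_of_succession(0, 63)
    print(f"Estimated probability for the next period: {estimate} ~ {float(estimate):.4f}")
```

Note that the rule never returns exactly zero, which is why it is often used for events that have not happened yet.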

More details are available online at the Society for Risk Analysis’s website. James Blodgett will be presenting on the precautionary principle two days earlier (Monday, Dec. 8th).

30 days to make antibodies to limit Pandemics

For both ethical and practical reasons, monoclonals are usually made in mice. And that’s a problem, because the human immune system recognizes the mouse proteins as foreign and sometimes attacks them instead. The result can be an allergic reaction, and sometimes even death.

To get around that problem, researchers now “humanize” the antibodies, replacing some or all of the mouse-derived pieces with human ones.

Wilson and Ahmed were interested in the immune response to vaccination. Conventional wisdom held that the B-cell response would be dominated by “memory” B cells. But as the study authors monitored individuals vaccinated against influenza, they found that a different population of B cells peaked about one week after vaccination, and then disappeared, before the memory cells kicked in. This population of cells, called antibody-secreting plasma cells (ASCs), is highly enriched for cells that target the vaccine, with vaccine-specific cells accounting for nearly 70 percent of all ASCs.

“That’s the trick,” said Wilson. “So instead of one cell in 1,000 binding to the vaccines, now it is seven in 10 cells.”

All of a sudden, the researchers had access to a highly enriched pool of antibody-secreting cells, something that is relatively easy to produce in mice, but hard to come by for human B cells.

To ramp up the production and cloning of these antibodies, the researchers added a second twist. Mouse monoclonal antibodies are traditionally produced in the lab from hybridomas, which are cell lines made by fusing the antibody-producing cell with a cancer cell. But human cells don’t respond well to this treatment. So Wilson and his colleagues isolated the ASC antibody genes and transferred them into an “immortalized” cell line. The result was the generation of more than 100 different monoclonals in less than a year, with each taking just a few weeks to produce.

In the event of an emerging flu pandemic, for instance, this approach could lead to faster production of human monoclonals to both diagnose and protect against the disease.

Journal Nature article: Rapid cloning of high-affinity human monoclonal antibodies against influenza virus

Nature 453, 667–671 (29 May 2008) | doi:10.1038/nature06890; Received 16 October 2007; Accepted 4 March 2008; Published online 30 April 2008

Pre-existing neutralizing antibody provides the first line of defence against pathogens in general. For influenza virus, annual vaccinations are given to maintain protective levels of antibody against the currently circulating strains. Here we report that after booster vaccination there was a rapid and robust influenza-specific IgG+ antibody-secreting plasma cell (ASC) response that peaked at approximately day 7 and accounted for up to 6% of peripheral blood B cells. These ASCs could be distinguished from influenza-specific IgG+ memory B cells that peaked 14–21 days after vaccination and averaged 1% of all B cells. Importantly, as much as 80% of ASCs purified at the peak of the response were influenza specific. This ASC response was characterized by a highly restricted B-cell receptor (BCR) repertoire that in some donors was dominated by only a few B-cell clones. This pauci-clonal response, however, showed extensive intraclonal diversification from accumulated somatic mutations. We used the immunoglobulin variable regions isolated from sorted single ASCs to produce over 50 human monoclonal antibodies (mAbs) that bound to the three influenza vaccine strains with high affinity. This strategy demonstrates that we can generate multiple high-affinity mAbs from humans within a month after vaccination. The panel of influenza-virus-specific human mAbs allowed us to address the issue of original antigenic sin (OAS): the phenomenon where the induced antibody shows higher affinity to a previously encountered influenza virus strain compared with the virus strain present in the vaccine. However, we found that most of the influenza-virus-specific mAbs showed the highest affinity for the current vaccine strain. Thus, OAS does not seem to be a common occurrence in normal, healthy adults receiving influenza vaccination.

Preventing flu fatalities by stopping immune system overreaction

With the receptor identified, a therapy can be developed that will bind to the receptor, preventing the deadly immune response. Also, by targeting a receptor in humans rather than a particular strain of flu, therapies developed to exploit this discovery would work regardless of the rapid mutations that bedevil flu vaccine producers every year.

This discovery could lead to treatments which turn off the inflammation in the lungs caused by influenza and other infections, according to a study published today in the journal Nature Immunology. The virus is often cleared from the body by the time symptoms appear and yet symptoms can last for many days, because the immune system continues to fight the damaged lung. The immune system is essential for clearing the virus, but it can damage the body when it overreacts if it is not quickly contained.

The immune overreaction accounts for the high percentage of young, healthy people who died in the vicious 1918 flu pandemic. While the flu usually kills the very young or the sickly and old, the pandemic flu provoked healthy people’s stronger immune systems to react even more profoundly than usual, exacerbating the symptoms and ultimately causing between 50 and 100 million deaths worldwide. These figures from the past make the new discovery that much more important, as new therapies based on this research could prevent a future H5N1 bird flu pandemic from turning into a repeat of the 1918 Spanish flu.

In the new study, the researchers gave mice infected with influenza a mimic of CD200, or an antibody to stimulate CD200R, to see if these would enable CD200R to bring the immune system under control and reduce inflammation.

The mice that received treatment had less weight loss than control mice and less inflammation in their airways and lung tissue. The influenza virus was still cleared from the lungs within seven days and so this strategy did not appear to affect the immune system’s ability to fight the virus itself.

The researchers hope that in the event of a flu pandemic, such as a pandemic of H5N1 avian flu that had mutated to be transmissible between humans, the new treatment would add to the current arsenal of anti-viral medications and vaccines. One key advantage of this type of therapy is that it would be effective even if the flu virus mutated, because it targets the body’s overreaction to the virus rather than the virus itself.

In addition to the possible applications for treating influenza, the researchers also hope their findings could lead to new treatments for other conditions where excessive immunity can be a problem, including other infectious diseases, autoimmune diseases and allergy.

Time for a Bigger Machine!

We are currently hosting lifeboat.com on free web space provided by rubyredlabs.com. Due to the growth in our traffic plus more general activity on this server, it would be best if we had our own server.

Note that we have additional space from KurzweilAI.net on a shared server (shared with many domains) but the shared server is always rather loaded since it has so many domains on it so we don’t host our main pages on it. (We use the shared server for backups, file transfers, and less important domains.)

Our current solution is to stay with the same provider as rubyredlabs.com but to move to our own machine. (This should simplify the transition.) The current provider is theplanet.com. We plan on getting: Intel Xeon 3210 Quad Core Kentsfield Processor, 250GB HDD, 4GB RAM, 2500GB bandwidth, 10 IPs, 100mbps uplink — $199 monthly / $25 setup.

We welcome any feedback. We are currently on a system equal to: Celeron 2.0, 80GB HDD, 1GB RAM, 750GB bandwidth, 5 IPs, 10mbps uplink ($89 monthly / $0 setup), but we don’t have access to all of the system’s resources. We just finished an upgrade so that both our blog pages and regular web pages are cached, enabling us to handle a lot of traffic, but it doesn’t help much if the server has activity on it besides ours which is not cached… We also upgraded our spam filter so it runs about one thousand times as fast, which was rather helpful.

Our traffic is around 150GB but obviously if it surged a lot it is easier to pay for more bandwidth than to get a new machine on the spot. Also our current machine is running FreeBSD — we plan on getting Red Hat 5 since we have a choice.

We welcome your input on this!

UPDATE: Jaan Tallinn, cofounder of Skype, the only major IM client that both securely authenticates conversation participants and encrypts the communication, gets us started with a $300 donation. Our goal is to raise $2,500 to pay for a faster machine for a year.

UPDATE II: Chris Haley agrees to become System Administrator for our new machine. He also donates $1,500 bringing our Bigger Machine Fund to $1,925. Only $575 to go!

SUCCESS! We were able to quickly raise $3,400 for our Bigger Machine Fund — far exceeding our goal of $2,500. Additional funds raised will be used to pay for additional months, for improved network/software/hardware security, and for a backup plan. Long-term, we plan on hosting our site with more than one provider for the ultimate in backup plans. The more you donate, the more infrastructure we will implement.

$153 million/city thin film plastic domes can protect against nuclear weapons and bad weather

Cross posted from Nextbigfuture

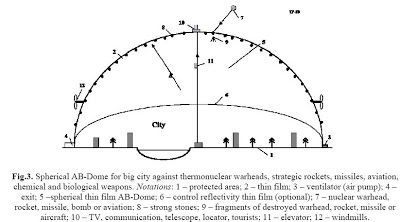

Now Alexander Bolonkin has come up with a cheaper, technologically easier, and more practical approach: thin-film inflatable domes. Not only would a dome provide protection from nuclear devices, it could also be used to mount high-altitude communication devices, windmill power, and many other money-generating uses. The film mass covering 1 km² of ground area is M1 = 2×10⁶ mc = 600 tons/km², and the film cost is $60,000/km².

The area of a big city with a diameter of 20 km is 314 km². The area of a hemispherical dome over it is 628 km². The cost of the dome cover is $62.8 million. We can use a lower overpressure (p = 0.001 atm) and decrease the cover cost by 5–7 times. The total cost of installation is about $30–90 million. Not only does it cost only about $153 million to protect a city, it is also cheaper than a geosynchronous satellite for high-speed communications. Alexander Bolonkin’s website
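The area figures can be checked with a few lines of geometry. This is only a sketch: the function name is made up for illustration, and the film price is left as a parameter because the post does not say which unit price yields its $62.8 million cover cost. At the $60,000/km² quoted earlier, 628 km² of film would come to roughly $37.7 million, while $62.8 million over 628 km² works out to $100,000/km².

```python
import math


def dome_cover_estimate(city_diameter_km: float, film_cost_per_km2: float):
    """Return (ground area, hemispherical dome area, film cost).

    Ground area is a disc (pi * r^2); the dome is a hemisphere of the
    same radius (2 * pi * r^2). The unit film cost is an input assumption.
    """
    radius = city_diameter_km / 2
    ground_area = math.pi * radius ** 2
    dome_area = 2 * math.pi * radius ** 2
    return ground_area, dome_area, dome_area * film_cost_per_km2


if __name__ == "__main__":
    ground, dome, cost = dome_cover_estimate(20, 60_000)
    print(f"ground area: {ground:.0f} km^2")
    print(f"dome area:   {dome:.0f} km^2")
    print(f"film cost:   ${cost / 1e6:.1f} million")
```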

The author suggests a cheap closed AB-Dome which protects densely populated cities from nuclear, chemical, and biological weapons (bombs) delivered by warheads, strategic missiles, rockets, and various incarnations of aviation technology. The offered AB-Dome is also very useful in peacetime because it shields a city from exterior weather and creates a fine climate within the AB-Dome. The hemispherical AB-Dome is an inflatable, thin transparent film, located at an altitude of up to 15 km, which converts the city into a closed-loop system. The film may be armored with stones which destroy rockets and nuclear warheads. The AB-Dome protects the city in case of a world nuclear war and total poisoning of the Earth’s atmosphere by radioactive fallout (gases and dust). Construction of the AB-Dome is easy: the enclosure’s film is spread upon the ground, the air pump is turned on, and the cover rises to its planned altitude, where it is supported by a small air overpressure. The offered method is a thousand times cheaper than protecting a city with current anti-rocket systems. The AB-Dome may also be used (at heights of 15 kilometers and more) for TV, communication, telescopes, long-distance location, tourism, high-placed windmills (energy), illumination, and entertainment. The author developed the theory of the AB-Dome, made estimates and computations, and computed a typical project.

His idea is a thin dome covering a city, made of a very transparent film 2 (Fig. 1). The film has a thickness of 0.05–0.3 mm. It is located at high altitude (5–20 km). The film is supported at this altitude by a small additional air pressure produced by ground ventilators. It is connected to the ground by managed cables 3. The film may have a controlled transparency option. As a further option, the system can have a second, lower film 6 with controlled reflectivity.

The offered protection defends in the following way. The smallest space warhead has a minimum cross-section area of 1 m² and a huge speed of 3–5 km/s. The warhead gets a blow and overload from the film (mass about 0.5 kg). This overload is 500–1500 g and destroys the warhead (see computation below). The warhead also gets an overpowering blow from 2–5 strong stones (each with a mass of 0.5–1 kg). The kinetic energy of each stone relative to the warhead is about 8 million joules! (That is 2–3 times more than the energy of 1 kg of explosive!) The film destroys a high-speed warhead (or aircraft, bomber, or winged missile), especially if the film is armored with stones.

Our dome cover (film) has 2 layers: a top transparent layer 2, located at a maximum altitude (up to 5–20 km), and a lower transparent layer 4 with controlled reflectivity, located at an altitude of 1–3 km (optional). The upper transparent cover has a thickness of about 0.05–0.3 mm and supports the strong protective stones (pebbles) 8. The stones have a mass of 0.2–1 kg and are spaced about 0.5 m apart.
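The stone energy figure quoted above follows from the ordinary kinetic energy formula, E = ½mv². The sketch below assumes a 1 kg stone and a 4 km/s closing speed (the middle of the 3–5 km/s range), and uses the conventional 4.184 MJ per kilogram of TNT for comparison; these choices and the function name are illustrative assumptions, not values taken from Bolonkin’s computation.

```python
TNT_JOULES_PER_KG = 4.184e6  # conventional TNT equivalent, used only for comparison


def kinetic_energy_joules(mass_kg: float, speed_m_per_s: float) -> float:
    """Classical kinetic energy: E = 1/2 * m * v^2."""
    return 0.5 * mass_kg * speed_m_per_s ** 2


if __name__ == "__main__":
    # Assumed inputs: 1 kg stone, warhead closing at 4 km/s.
    energy = kinetic_energy_joules(1.0, 4000.0)
    print(f"energy: {energy / 1e6:.1f} MJ")
    print(f"ratio to 1 kg of TNT: {energy / TNT_JOULES_PER_KG:.2f}")
```

Because energy grows with the square of speed, the result is sensitive to where in the 3–5 km/s range the warhead actually falls.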

If we want to control the temperature in the city, the top film must have several layers: a transparent dielectric layer, a conducting layer (about 1–3 microns), a liquid crystal layer (about 10–100 microns), a conducting layer (for example, SnO2), and a transparent dielectric layer. The total thickness is 0.05–0.5 mm. The control voltage is 5–10 V. This film may be produced by industry relatively cheaply.

If some level of light control is needed, materials can be incorporated to control transparency. Also, some transparent solar cells can be used to gather wide-area solar power.

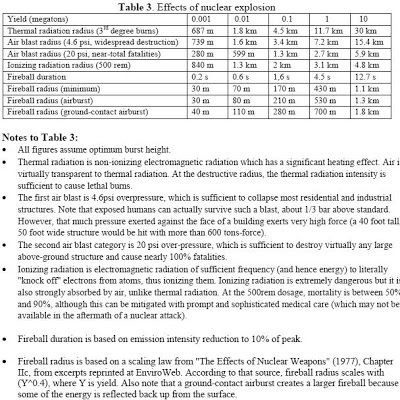

As you can see, for a 10 kt bomb exploded at an altitude of 10 km, the air blast effect is decreased by about 1,000 times, and the thermal radiation effect by about 500 times without the second cover film and about 5,000 times with the second reflective film. For a 100 kt hydrogen bomb exploded at an altitude of 10 km, the air blast effect is decreased by about 10 times, and the thermal radiation effect by about 20 times without the second cover film and about 200 times with the second reflective film. Only a 1,000 kt thermonuclear (hydrogen) bomb can damage the city, and even then the damage will be 10 times less from air blast and 10 times less from thermal radiation. If the film is located at an altitude of 15 km, the damage will be 85 times less from the air blast and 65 times less from the thermal radiation.

For protection from a super thermonuclear (hydrogen) bomb, we need higher dome altitudes (20–30 km and more). An AB-Dome could also cover an important large region or an entire country.

Because the dome is lightweight, it could stay in place even with very large holes. Multiple shells of domes could still be made for more protection.

Better climate inside a dome can make for more productive farming.

1. AB-Dome is hundreds of times cheaper than current anti-rocket systems.

2. AB-Dome does not need high technology and can be built by a poor country.

3. It is easy to build.

4. The dome is useful in peacetime; it creates a fine climate (weather) inside the dome.

5. AB-Dome protects from nuclear, chemical, and biological weapons.

6. The dome allows the autonomous existence of the city population after a total world nuclear war and total contamination (infection) of the whole planet and its atmosphere.

7. The dome may be used for high-altitude TV, for communication, for long-distance location, and for astronomy (telescopes).

8. The dome may be used for high-altitude tourism.

9. The dome may be used for high-altitude windmills (a source of cheap renewable wind energy).

10. The dome may be used for night illumination and entertainment.

Disruptions from small recessions to extinctions

Cross posted from Next big future by Brian Wang, Lifeboat Foundation Director of Research

I am presenting disruption events for humans and also for biospheres and planets and, where I can, correlating them with historical frequency and scale.

There has been previous work on categorizing and classifying extinction events. There is Bostrom’s paper, and there is also the work by Jamais Cascio and Michael Anissimov on classification and identifying risks (presented below).

A recent article discusses the inevitable “end of societies” (it refers to civilizations, but it seems to be referring more to things like the end of the Roman Empire, which still ends up later with Italy, Austria-Hungary, etc. emerging).

The theories around complexity seem to me to be that core developments along connected S-curves of technology and societal processes cap out (around key areas of energy, transportation, governing efficiency, agriculture, production) and then a society falls back (into a soft or hard dark age), reconstitutes, and starts back up again.

Here is a wider range of disruptions, which can also be correlated to the frequency with which they have occurred historically.

High growth drop to Low growth (short business cycles, every few years)

Recession (soft or deep) Every five to fifteen years.

Depressions (50−100 years, can be more frequent)

List of recessions for the USA (includes depressions)

Differences recession/depression

A good rule of thumb for determining the difference between a recession and a depression is to look at the changes in GNP. A depression is any economic downturn where real GDP declines by more than 10 percent. A recession is an economic downturn that is less severe. By this yardstick, the last depression in the United States was from May 1937 to June 1938, where real GDP declined by 18.2 percent. The Great Depression of the 1930s can be seen as two separate events: an incredibly severe depression lasting from August 1929 to March 1933 where real GDP declined by almost 33 percent, a period of recovery, then another less severe depression of 1937–38. (Depressions occur every 50–100 years and were more frequent in the past.)
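The rule of thumb above amounts to a single threshold test. A minimal sketch, assuming the input is the peak-to-trough decline in real GDP expressed as a positive percentage (the function name and the handling of zero or negative declines are my own choices):

```python
DEPRESSION_THRESHOLD_PERCENT = 10.0  # from the rule of thumb quoted above


def classify_downturn(real_gdp_decline_percent: float) -> str:
    """Classify a downturn by its peak-to-trough decline in real GDP."""
    if real_gdp_decline_percent <= 0:
        return "no downturn"
    if real_gdp_decline_percent > DEPRESSION_THRESHOLD_PERCENT:
        return "depression"
    return "recession"


if __name__ == "__main__":
    # Declines quoted in the text: 1937-38 (18.2%) and 1929-33 (almost 33%).
    for label, decline in [("1937-38", 18.2), ("1929-33", 33.0)]:
        print(label, classify_downturn(decline))
```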

Dark age (period of societal collapse, soft/light or regular)

I would say the difference between a long recession and a dark age has to do with breakdown of societal order and some level of population decline / dieback, loss of knowledge/education breakdown. (Once per thousand years.)

I would say that a soft dark age is also something like what China had from the 1400s to 1970.

Basically a series of really bad societal choices. Maybe something between depressions and dark age or something that does not categorize as neatly but an underperformance by twenty times versus competing groups. Perhaps there should be some kind of societal disorder, levels and categories of major society wide screw ups — historic level mistakes. The Chinese experience I think was triggered by the renunciation of the ocean going fleet, outside ideas and tech, and a lot of other follow on screw ups.

Plagues played a part in weakening the Roman and Han empires.

Societal collapse talk which includes Toynbee analysis.

Toynbee argues that the breakdown of civilizations is not caused by loss of control over the environment, over the human environment, or attacks from outside. Rather, it comes from the deterioration of the “Creative Minority,” which eventually ceases to be creative and degenerates into merely a “Dominant Minority” (who forces the majority to obey without meriting obedience). He argues that creative minorities deteriorate due to a worship of their “former self,” by which they become prideful, and fail to adequately address the next challenge they face.

My take is that the Enlightenment would be strengthened with a larger creative majority, where everyone has a stake and capability to creatively advance society. I have an article about who the elite are now.

Many now argue that the dark ages were not as completely bad as commonly believed.

The dark ages are also called the Middle Ages.

Population during the middle ages

Between dark age/social collapse and extinction, there are levels of decimation/devastation (using orders of magnitude: 90+%, 99%, 99.9%, 99.99%).

Level 1 decimation = 90% population loss

Level 2 decimation = 99% population loss

Level 3 decimation = 99.9% population loss

Level 9 population loss (would pretty much be extinction for current human civilization). Only 6–7 people left or less which would not be a viable population.
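The levels above follow a simple pattern: level n leaves one person in 10ⁿ alive. Here is a short sketch of that arithmetic, assuming a world population of about 6.7 billion (an approximate 2008 figure that I am supplying; the post does not state the number it used):

```python
WORLD_POPULATION = 6_700_000_000  # assumed approximate 2008 figure


def survivors(population: int, level: int) -> float:
    """People remaining after a level-n decimation (one in 10**n survives)."""
    return population / 10 ** level


def loss_percent(level: int) -> float:
    """Percentage of the population lost at a given decimation level."""
    return 100 - 100 / 10 ** level


if __name__ == "__main__":
    for level in (1, 2, 3, 9):
        print(f"level {level}: {loss_percent(level):.7f}% lost, "
              f"about {survivors(WORLD_POPULATION, level):,.1f} survivors")
```

Under that assumption, level 9 leaves between six and seven people, which matches the figure given in the list.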

Can be regional or global, some number of species (for decimation)

Categorizations of Extinctions, end of world categories

Can be regional or global, some number of species (for extinctions)

Mass extinction events have occurred in the past (to other species; for each species there can only be one extinction event): the dinosaurs, and many others.

Unfortunately Michael’s accelerating future blog is having some issues so here is a cached link.

Michael was identifying manmade risks

The Easier-to-Explain Existential Risks (remember an existential risk is something that can set humanity way back, not necessarily killing everyone):

1. neoviruses

2. neobacteria

3. cybernetic biota

4. Drexlerian nanoweapons

The hardest to explain is probably #4. My proposal here is that, if someone has never heard of the concept of existential risk, it’s easier to focus on these first four before even daring to mention the latter ones. But here they are anyway:

5. runaway self-replicating machines (“grey goo” not recommended because this is too narrow of a term)

6. destructive takeoff initiated by intelligence-amplified human

7. destructive takeoff initiated by mind upload

8. destructive takeoff initiated by artificial intelligence

Another classification scheme: the eschatological taxonomy by Jamais Cascio on Open the Future. His classification scheme has seven categories, one with two sub-categories. These are:

0: Regional Catastrophe (examples: moderate-case global warming, minor asteroid impact, local thermonuclear war)

1: Human Die-Back (examples: extreme-case global warming, moderate asteroid impact, global thermonuclear war)

2: Civilization Extinction (examples: worst-case global warming, significant asteroid impact, early-era molecular nanotech warfare)

3a: Human Extinction-Engineered (examples: targeted nano-plague, engineered sterility absent radical life extension)

3b: Human Extinction-Natural (examples: major asteroid impact, methane clathrates melt)

4: Biosphere Extinction (examples: massive asteroid impact, “iceball Earth” reemergence, late-era molecular nanotech warfare)

5: Planetary Extinction (examples: dwarf-planet-scale asteroid impact, nearby gamma-ray burst)

X: Planetary Elimination (example: post-Singularity beings disassemble planet to make computronium)

A couple of interesting posts about historical threats to civilization and life by Howard Bloom.

They cover threats from natural climate shifts and from space (not asteroids but interstellar gases).

Humans are not the most successful form of life; bacteria are. Bacteria have survived for 3.85 billion years, humans for 100,000 years. All other kinds of life lasted no more than 160 million years. [Other species have only managed to hang in there for anywhere from 1.6 million years to 160 million. We humans are one of the shortest-lived natural experiments around. We’ve been here in one form or another for a paltry two and a half million years.] If your numbers are not big enough and you are not diverse enough then something in nature eventually wipes you out.

Following the bacteria survival model could mean using transhumanism as a survival strategy: creating more diversity to allow for better survival. Humans could be adapted to living under the sea, deep in the earth, or in various niches in space, with more radiation resistance, non-biological forms, etc. It would also mean spreading into space (panspermia). Individually, using technology, we could become very successful at life extension, but it will take more than that to make a good plan for the long-term survival of humanity (civilization, society, species).

Other periodic challenges:

142 mass extinctions, 80 glaciations in the last two million years, a planet that may have once been a frozen iceball, and a klatch of global warmings in which the temperature has soared by 18 degrees in ten years or less.

In the last 120,000 years there were 20 interludes in which the temperature of the planet shot up 10 to 18 degrees within a decade. Until just 10,000 years ago, the Gulf Stream shifted its route every 1,500 years or so. This would melt mega-islands of ice, put our coastal cities beneath the surface of the sea, and strip our farmlands of the conditions they need to produce the food that feeds us.

The solar system has a 240-million-year-long-orbit around the center of our galaxy, an orbit that takes us through interstellar gas clusters called local fluff, interstellar clusters that strip our planet of its protective heliosphere, interstellar clusters that bombard the earth with cosmic radiation and interstellar clusters that trigger giant climate change.

Spending Effectively

Last year, the Singularity Institute raised over $500,000. The World Transhumanist Association raised $50,000. The Lifeboat Foundation set a new record for the single largest donation. The Center for Responsible Nanotechnology’s finances are combined with those of World Care, a related organization, so the public can’t get precise figures. But overall, it’s safe to say, we’ve been doing fairly well. Most not-for-profit organizations aren’t funded adequately; it’s rare for charities, even internationally famous ones, to have a large full-time staff, a physical headquarters, etc.

The important question is, now that we’ve accumulated all of this money, what are we going to spend it on? It’s possible, theoretically, to put it all into Treasury bonds and forget about it for thirty years, but that would be an enormous waste of expected utility. In technology development, the earlier the money is spent (in general), the larger the effect will be. Spending $1M on a technology in the formative stages has a huge impact, probably doubling the overall budget or more. Spending $1M on a technology in the mature stages won’t even be noticed. We have plenty of case studies: Radios. TVs. Computers. Internet. Telephones. Cars. Startups.

The opposite danger is overfunding the project, commonly called “throwing money at the problem”. Hiring a lot of new people without thinking about how they will help is one common symptom. Having bloated layers of middle management is another. To an outside observer, it probably seems like we’re reaching this stage already. Hiring a Vice President In Charge Of Being In Charge doesn’t just waste money; it causes the entire organization to lose focus and distracts everyone from the ultimate goal.

I would suggest a top-down approach: start with the goal, figure out what you need, and get it. The opposite approach is to look for things that might be useful, get them, then see how you can complete a project with the stuff you’ve acquired. NASA is an interesting case study, as they followed the first strategy for a number of years, then switched to the second one.

The second strategy is useful at times, particularly when the goal is constantly changing. Paul Graham suggests using it as a strategy for personal success, because the ‘goal’ is changing too rapidly for any fixed plan to remain viable. “Personal success” in 2000 is very different from “success” in 1980, which was different from “success” in 1960. If Kurzweil’s graphs are accurate, “success” in 2040 will be so alien that we won’t even be able to recognize it.

But when the goal is clear (save the Universe, create an eternal utopia, develop new technology X), you simply need to smash through whatever problems show up. Apparently, money has been the main blocker for some time, and it looks like we’ve overcome that (in the short-term) through large-scale fundraising. There’s a large body of literature out there on how to deal with organizational problems; thousands of people have done this stuff before. I don’t know what the main blocker is now, but odds are it’s in there somewhere.

Cheap (tens of dollars) genetic lab on a chip systems could help with pandemic control

Cross posted from Next big future

Since a journal article was submitted to the Royal Society of Chemistry, the U of Alberta researchers have already made the processor and unit smaller and have brought the cost of building a portable unit for genetic testing down to about $100 Cdn. In addition, these systems are also portable and even faster (they take only minutes). Backhouse, Elliott and McMullin are now demonstrating prototypes of a USB key-like system that may ultimately be as inexpensive as standard USB memory keys that are in common use – only tens of dollars. It can help with pandemic control and with detecting and controlling tainted water supplies.

This development fits in with my belief that there should be widespread inexpensive blood, biomarker and genetic tests to help catch disease early and to develop an understanding of biomarker changes to track disease and aging development. We can also create adaptive clinical trials to shorten the development and approval process for new medical procedures.

The device is now much smaller than the size of a shoebox (USB stick size), with the optics and supporting electronics filling the space around the microchip.

Canadian scientists have succeeded in building the least expensive portable device for rapid genetic testing ever made. The cost of carrying out a single genetic test currently varies from hundreds to thousands of pounds, and the wait for results can take weeks. Now a group led by Christopher Backhouse, University of Alberta, Edmonton, have developed a reusable microchip-based system that costs just 500 pounds to build, is small enough to be portable, and can be used for point-of-care medical testing.

To keep costs down, ‘instead of using the very expensive confocal optics systems currently used in these types of devices we used a consumer-grade digital camera’, Backhouse explained.

The device can be adapted for use in many different genetic tests. ‘By making small changes to the system you could test for a person’s predisposition to cancer, carry out pharmacogenetic tests for adverse drug reactions or even test for pathogens in a water supply,’ said Backhouse.

The heart of the unit, the ‘chip,’ looks like a standard microscope slide etched with fine silver and gold lines. That microfabricated chip applies nano-biotechnologies within tiny volumes, sometimes working with only a few molecules of sample. Because of this highly integrated chip (containing microfluidics and microscale devices), the remainder of the system is inexpensive ($1,000) and fast.

There are many possible uses for such a portable genetic testing unit:

Backhouse notes that adverse drug reactions are a major problem in health care. By running a quick genetic test on a cancer patient, for example, doctors might pinpoint the type of cancer and determine the best drug and correct dosage for the individual.

Or health-care professionals can easily look for the genetic signature for a virus or E. coli – also making it useful for testing water quality.

“From a public health point of view, it would be wonderful during an epidemic to be able to do a quick test on a patient when they walk into an emergency room and be able to say, ‘you have SARS, you need to go into that (isolation) room immediately.’ ”

A family doctor might determine a person’s genetic predisposition to an illness during an office visit and advise the patient on preventative lifestyle changes.

FURTHER READING

Microfabrication technologies research at the University of Alberta

In collaboration with the Glerum Lab we have been developing microchip based implementations of genetic amplification (PCR — the polymerase chain reaction) and capillary electrophoresis (CE) that are extremely fast.

On the brink of Synthetic Life: DNA synthesis has increased twenty times to full bacteria size

Reposted from Next Big Future, formerly advancednano.

A 582,970 base pair sequence of DNA has been synthesized.

It’s the first time a genome the size of a bacterium’s has been chemically synthesized, and it is about 20 times longer than [any DNA molecule] synthesized before.

This is a huge increase in capability. It has broad implications for DNA nanotechnology and synthetic biology.

It is particularly relevant for the Lifeboat Foundation BioShield project.

This means that the Venter Institute is on the brink of synthesizing a new bacterial life form.

The process to synthesize and assemble the synthetic version of the M. genitalium chromosome began first by resequencing the native M. genitalium genome to ensure that the team was starting with an error free sequence. After obtaining this correct version of the native genome, the team specially designed fragments of chemically synthesized DNA to build 101 “cassettes” of 5,000 to 7,000 base pairs of genetic code. As a measure to differentiate the synthetic genome from the native genome, the team created “watermarks” in the synthetic genome. These are short inserted or substituted sequences that encode information not typically found in nature. Other changes the team made to the synthetic genome included disrupting a gene to block infectivity. To obtain the cassettes the JCVI team worked primarily with the DNA synthesis company Blue Heron Technology, as well as DNA 2.0 and GENEART.

From here, the team devised a five stage assembly process where the cassettes were joined together in subassemblies to make larger and larger pieces that would eventually be combined to build the whole synthetic M. genitalium genome. In the first step, sets of four cassettes were joined to create 25 subassemblies, each about 24,000 base pairs (24kb). These 24kb fragments were cloned into the bacterium Escherichia coli to produce sufficient DNA for the next steps, and for DNA sequence validation.

The next step involved combining three 24kb fragments together to create 8 assembled blocks, each about 72,000 base pairs. These 1/8th fragments of the whole genome were again cloned into E. coli for DNA production and DNA sequencing. Step three involved combining two 1/8th fragments together to produce large fragments approximately 144,000 base pairs or 1/4th of the whole genome.

At this stage the team could not obtain half genome clones in E. coli, so the team experimented with yeast and found that it tolerated the large foreign DNA molecules well, and that they were able to assemble the fragments together by homologous recombination. This process was used to assemble the last cassettes, from 1/4 genome fragments to the final genome of more than 580,000 base pairs. The final chromosome was again sequenced in order to validate the complete accurate chemical structure.
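The five stage assembly described above can be sketched as simple arithmetic. This is only a rough illustration, not the JCVI team's actual procedure: the function name `assembly_stages` is made up for this post, the group sizes for stages four and five (two pieces each, quarters to halves to whole) are my assumption from the "1/4 genome" and "half genome" wording, leftover pieces are assumed to be folded into an existing group, and average sizes are taken by dividing the full 582,970 base pairs evenly.

```python
GENOME_BP = 582_970         # total length quoted in the post
CASSETTES = 101             # starting cassettes of 5,000 to 7,000 bp
FAN_IN = [4, 3, 2, 2, 2]    # pieces joined per group; last two values are assumed


def assembly_stages(pieces, fan_in, genome_bp):
    """Return (piece_count, average_size_bp) after each assembly stage.

    Leftover pieces are assumed to be folded into an existing group,
    so the count after a stage is the floor of pieces / group size.
    """
    stages = []
    for group_size in fan_in:
        pieces = max(1, pieces // group_size)
        stages.append((pieces, genome_bp / pieces))
    return stages


stages = assembly_stages(CASSETTES, FAN_IN, GENOME_BP)
for number, (count, size) in enumerate(stages, start=1):
    print(f"stage {number}: {count} pieces of about {size:,.0f} bp")
```

Under these assumptions the piece counts come out as 25, 8, 4, 2 and 1, with average sizes close to the 24kb, 72kb and 144kb figures quoted for the first three steps.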

The synthetic M. genitalium has a molecular weight of 360,110 kilodaltons (kDa). Printed in 10 point font, the letters of the M. genitalium JCVI-1.0 genome span 147 pages.
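As a quick sanity check on those two figures, here is a short calculation of what they imply per base pair and per page. It uses only the numbers quoted in this post; the variable names are mine.

```python
GENOME_BP = 582_970   # base pairs in the synthetic genome
MASS_KDA = 360_110    # quoted molecular weight in kilodaltons
PAGES = 147           # quoted page count at 10 point font

# Implied average mass of one base pair, in daltons.
da_per_bp = MASS_KDA * 1_000 / GENOME_BP

# Implied number of letters printed on each page.
letters_per_page = GENOME_BP / PAGES

print(f"{da_per_bp:.1f} Da per base pair")
print(f"{letters_per_page:.0f} letters per page")
```

That works out to a little under 618 Da per base pair, which is in the range commonly quoted for double-stranded DNA, and just under 4,000 letters per page.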