By Eliott Edge

“It is possible for a computer to become conscious. Basically, we are that. We are data, computation, memory. So we are conscious computers in a sense.”

—Tom Campbell, NASA

If the universe is a computer simulation, virtual reality, or video game, then a few unusual conditions seem to necessarily fall out from that reading. One is that what we call consciousness, the mind, is actually something like an artificial intelligence. If the universe is a computer simulation, we are all likely one form of AI or another. In fact, we might come from the same computer that is creating this simulated universe to begin with. If so, then it stands to reason that we are virtual characters and virtual minds in a virtual universe.

In Breaking into the Simulated Universe, I discussed how if our universe is a computer simulation, then our brain is just a virtual brain. It is our avatar’s brain—but our avatar isn’t really real. It is only ever real enough. Our virtual brain plays an important part in making the overall simulation appear real. The whole point of the simulation is to seem real, feel real, look real—this includes rendering virtual brains. In Breaking I went into this “virtual brain” conundrum, including how the motor-effects of brain damage work in a VR universe. The virtual brain concept seems to apply to many variants of the “universe is a simulation” proposal. But if the physical universe and our physical brain amount to just fancy window-dressing, and the bigger picture is indeed that we are in a simulated universe, then our minds are likely part of the big supercomputer that crunches out this mock universe. That is the larger issue. If the universe is a VR, then it seems to necessarily mean that human minds already are an artificial intelligence. Specifically, we are an artificial intelligence using a virtual lifeform avatar to navigate through an evolving simulated physical universe.

About the AI

There are several flavors of the simulation hypothesis and digital mechanics out there in science and philosophy; I refer to these different schools of thought with the umbrella term simulism.

In Breaking I went over the connection between Edward Fredkin’s concept of Other—the ‘other place,’ the computer platform, where our universe is being generated from—and Tom Campbell’s concept of Consciousness as an ever-evolving AI ruleset. If you take these two ideas and run with them, what you end up with is an interesting inevitability: over enough time and enough evolutionary pressure, an AI supercomputer with enough resources should be pushed to crunch out any number of virtual universes and any number of conscious AI lifeforms. The big evolving AI supercomputer would be the origin of both physical reality and conscious life. And it would have evolved to be that way.

The supercomputer AI makes mock universes and AI lifeforms in order to forward its own information evolution, while at the same time avoiding a kind of “death” brought on by chaos, high entropy (disorganization), and noise winning over signal, over order. To Campbell, this is a form of evolution accomplished by interaction. It would mean not only that our whole universe is really a highly detailed version of The Sims, but also that it actually evolved to be this way from a ruleset—a ruleset with the specific purpose of further evolving the overall big supercomputer and the virtual lifeforms within it. The players, the game, and the big supercomputer crunching it all out evolve and develop as one.

Maybe this is the way it is, maybe not. Nevertheless, if it turns out our universe is some kind of computed virtual reality simulation, all conscious life will likely end up being cast as AI. This makes the situation interesting when imagining what role free will might play.

Free will

If we are an AI, then what about free will? Perhaps some of us virtual critters live without free will. Maybe there are philosophical zombies and non-playable characters amongst us—lifeforms that only seem to be conscious but actually aren’t. Maybe we already are zombies, and free will is an illusion. It should be noted that simulist frameworks do not all necessarily wipe out decision-making and free will. Campbell in particular argues that free will is fundamental to the supercomputing virtual reality learning machine. It uses free will and the virtual lifeforms’ interactions to learn and evolve by using the tool of decision-making. The feedback from those decisions drives evolution. In Campbell’s model, evolution is actually impossible without free will. Nevertheless, whether or not free will is real, or some have free will and others only appear to have it, let us reflect on our own experience of decision-making.

What is it like to make a choice? We do not seem to be merely linear, rote machines in our thinking and decision-making processes. It is not that we undergo x-stimulus and then always deliver a single, given, preloaded y-response every single time. We appear to think and consider. Our conclusions vary. We experience fuzzy logic. Our feelings play a role. We are apparently subject to a whole array of possible responses. And of course even non-responses, like choosing not to choose, are also responses. Perhaps even all this is just an illusion.

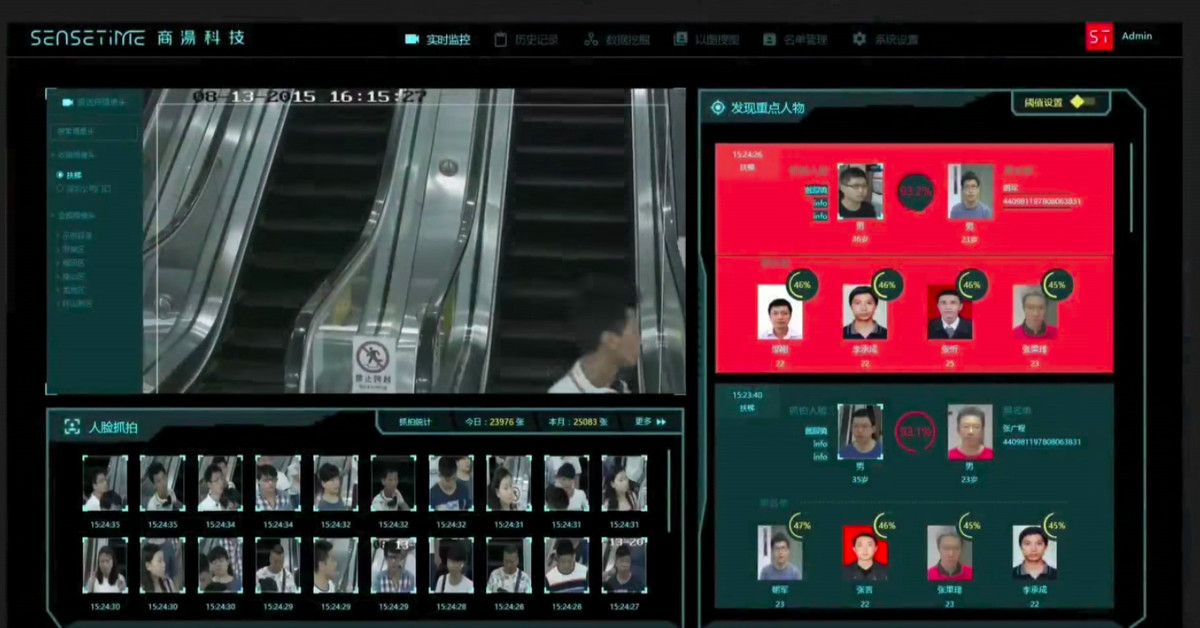

The question of free will might be difficult or impossible to answer. However, it does bring up a larger issue that seems to influence free will: programming. Whether we are free, “free enough,” or total zombies, an interesting question seems to almost always ride alongside the issue of choice and volition—it must be asked, what role does programming play? To begin this line of inquiry, we must first admit just how programmable we always already are.

Programming

Our whole biology is the result of pressure and programming. Tabula rasa, the idea that we are born as a “blank slate,” was chucked out long ago. We now know we arrive preprogrammed by millennia. There is barely a membrane between our programming and what we call (or assume to be) our conscious waking selves. This is dramatically explored in the 2016 series Westworld. Without giving away much in the way of spoilers, the story’s “hosts” are artificially intelligent robots that are trapped in programmed “loops,” repetitive cycles of thought and behavior. Regarding these loops, the hosts’ creator Dr. Ford (Anthony Hopkins) states, “Humans fancy that there’s something special about the way we perceive the world, and yet we live in loops as tight and as closed as the hosts do. Seldom questioning our choices. Content, for the most part, to be told what to do next.”

The programmability of biology and conscious life is already without question. We are manifestations of a complex blueprint called DNA—a set of instructions programmed by our environment interacting with our biology and genetics. Our diets, interests, how much sunlight we get a day, and even our stresses, feelings, and thoughts all have a measurable effect on our DNA. Our body is the living receipt of what is etched and programmed into our DNA.

DNA is made up of information and instructions. This information has been programmed by a variety of other types of environmental, physiological, and psychic information over vast eons of time. We grow gills due to the presence of water, or lungs due to the presence of air. Sometimes we grow four stomachs. Sometimes we grow ears so sensitive that they can “see” mass in the dark. The world talks to us, and so we change ourselves based on what we are able to pick up. Reality informs us, and we mutate accordingly. If the universe is a computer program, then so too are we programmed by it. The VR environment program also programs the conscious AIs living in it.

In part, our social environment programs our psychologies. Our families, languages, neighborhoods, cultures, religions, ideologies, expectations, fears, addictions, rewards, needs, slogans—these are all largely programmed into us as well. They define and shape our individual and collective personhood. And they all program our view of the world, and our selves within it. Our information exchange through socialization programs us.

Ultimately, programming is instruction. But human beings often experience conflicting sets of instructions simultaneously. One of Sigmund Freud’s great contributions was his identification of “das Unbehagen.” Unbehagen refers to the uneasiness we feel as our instincts (one set of instructions) come into conflict with our culture, society, values, and civilization (another set of instructions). We choose not to cheat on our partner with someone wildly attractive, even though we might really want to. We don’t attack someone even though they might sorely deserve it. The fallout of this behavior is potentially just too great to follow through with. If this conflict is left unprocessed, we develop neuroses, obsessions, and pathologies inside of us that are beyond our conscious control. “Demons” and “hungry ghosts” guide us to behaviors, thoughts, and states of being that are so upsetting to our waking conscious selves that we tend to describe them as unwanted, alien, or even as sin. They create a sense of feeling “out of control.” Indeed, conflicting instructions, conflicting thoughts, behaviors, and goals are causes of great suffering for many people. We develop illnesses of the body and mind, and then pass those smoldering genes—that malignant programming—onto the next generation. Here we have biological programming working against social programming, physiological instructions conflicting with societal instructions. Now just imagine an AI robot trying to compute two or three contradictory programs simultaneously. You would see an android throwing a fit, breaking down, shutting off, and hopefully eventually attempting to put itself back together.

In terms of conflicting programming, an interesting aside can be found in comedy. Humor often strikes in the form of contradiction, as in Shakespeare’s Hamlet. Polonius famously claims that “brevity is the soul of wit,” yet he is ironically verbose—naturally implying that he is witless. In this case we have contradiction—does not compute. But not all humor is contradiction. Consider the joke, “Can a kangaroo jump higher than a house?” The punchline is, “Of course they can. Houses don’t jump at all.” This joke does not translate to does not compute; instead this joke computes all too well. In many instances, this is humor: it either doesn’t make sense, or it makes more sense than you ever expected. It is information brought into a new light—information recontextualized.

A final novel consideration to this idea of programming can be found in the phenomenon of ‘positive sexual imprinting.’ The way human beings choose sexual or romantic partners has long fascinated psychologists: those choices are often based on similarities to parents and caregivers. To our species-wide relief, this behavior is not exclusive to human beings. Mammals, birds, and even fish have been documented pairing up with mates that resemble their forebears. Even goats that are raised by sheep will grow up to pursue sheep, and vice versa. Here is another example of programming that often works just under our awareness, and yet it has a titanic, indeed central, effect on our lives. Choosing mates and partners, especially for long-term relationships or even procreation, is one of the circumstances that most dramatically guide our livelihood and our personal destiny. This is the depth of programming.

It was Freud who pointed out, in so many words, that your mind is not your own.

Goals and Rewards

Human beings love instruction. Recollect Dr. Ford’s remark from the previous section, “[Humans are] content, for the most part, to be told what to do next.” Chemically speaking, our rewards arrive through serotonin, dopamine, oxytocin, and endorphins. In waking life we experience them during events like social bonding and poignant experiences; we feel them alongside a sense of profound meaning and pleasure, and these experiences and chemicals even go on to help shape our values, goals, and lives. These complex chemical exchanges shoot through human beings particularly when we receive instructions and also when we accomplish goals.

We find it particularly rewarding when we happily do something for someone we love or admire. We are fond of all kinds of games and game playing. We enjoy drama and rewards. Acting within rules and roles, as well as bending or breaking them, is a moment-to-moment occupation for all human beings.

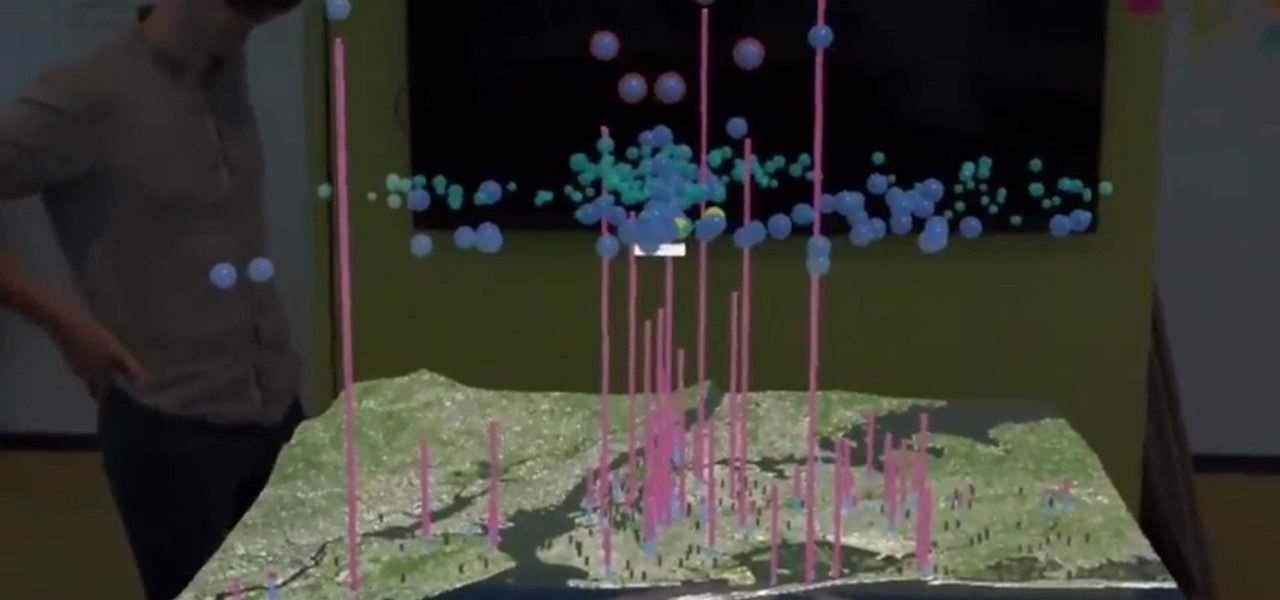

We also design goals that can only come into fruition years, sometimes decades, into the future. We then program and modify our being and circumstance to bring these goals into an eventual present; we change based on what we want. We feel meaning and purpose when we have a goal. We experience joy and fulfillment when that goal is achieved. Without a series of goals we become quite genuinely paralyzed. Even the movement of a limb from position A to position B is a goal. All motor functioning is goal-oriented. It turns out that the machine learning and AI systems that we are attempting to develop in laboratories today work particularly well when they are given goals and rewards.

In Daniel Dewey’s 2014 paper Reinforcement Learning and the Reward Engineering Principle, Dewey argued that adding rewards to machine learning actually encourages that system to produce useful and interesting behaviors. Google’s DeepMind research team has since developed an AI (which taught itself to walk in a VR environment), and subsequently published a paper in 2017 called A Distributional Perspective on Reinforcement Learning, apparently confirming this rewards-based approach.

Laurie Sullivan wrote a summary on Reinforcement Learning in a MediaPost article called Google: Deepmind AI Learns On Rewards System:

The system learns by trial and error and is motivated to get things correct based on rewards […]

The idea is that the algorithm learns, considers rewards based on its learning, and almost seems to eventually develop its own personality based on outside influences. In a new paper, DeepMind researchers show it is possible to model not only the average but also the reward as it changes. Researchers call this the “value distribution” or the distribution value of the report.

Rewards make reinforcement learning systems increasingly accurate and faster to train than previous models. More importantly, per researchers, it opens the possibility of rethinking the entire reinforcement learning process.
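To make the idea in this summary a little more concrete, here is a minimal Python sketch of reward-driven trial and error. It is only a toy, and it is not DeepMind's algorithm: the function `run_bandit`, the two made-up actions `steady` and `risky`, and every parameter value are hypothetical names and numbers invented for illustration. The learner tries actions, keeps a running average of the reward each one has paid, and also keeps a simple tally of every reward it has seen per action, which is a crude stand-in for the "value distribution" mentioned above.

```python
import random
from collections import Counter


def run_bandit(reward_fns, steps=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy trial and error over a small set of actions.

    reward_fns: list of hypothetical functions; each takes a random.Random
    and returns a numeric reward for choosing that action.
    Returns (means, hists, counts): the running average reward per action,
    a tally of every reward value seen per action, and how often each
    action was chosen.
    """
    rng = random.Random(seed)
    n = len(reward_fns)
    means = [0.0] * n
    counts = [0] * n
    hists = [Counter() for _ in range(n)]

    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: occasionally try an action at random.
            action = rng.randrange(n)
        else:
            # Exploit: pick the action with the best average so far.
            action = max(range(n), key=lambda a: means[a])

        reward = reward_fns[action](rng)
        counts[action] += 1
        # Incremental update of the running average reward.
        means[action] += (reward - means[action]) / counts[action]
        # Record the full spread of rewards, not just the average.
        hists[action][reward] += 1

    return means, hists, counts


def steady(rng):
    """Made-up action that always pays a small, certain reward."""
    return 1.0


def risky(rng):
    """Made-up action that pays 5.0 half the time and nothing otherwise."""
    return 5.0 if rng.random() < 0.5 else 0.0


means, hists, counts = run_bandit([steady, risky])
print("average reward per action:", means)
print("reward tallies per action:", [dict(h) for h in hists])
print("times each action was chosen:", counts)
```

The averages tell the learner which action pays better overall, while the tallies show how those rewards are spread out: one action is perfectly predictable, the other is all-or-nothing. Two actions with the same average could still feel very different to live with, which is roughly why tracking more than the average can be useful.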

If human beings and our computer AIs both develop valuably through goals and rewards, then these sorts of drives might be fundamental to consciousness itself. If they are fundamental to consciousness itself, and our universe is a computer simulation, then goals and rewards likely guide or influence the big evolving supercomputer AI behind life and reality. If this is all true, then there is a goal, there is a purpose embedded within the fabric of existence. Maybe there is even more than one.

Ontology and Meta-metaphors

In the essays Breaking into the Simulated Universe and Why It Matters That You Realize You’re in a Computer Simulation, I asked, ‘what happens after we embrace our reality as a computer simulation?’ In a neighboring line of thinking, all simulists must equally ask, ‘what happens after we realize we are an artificial intelligence in a computer simulation?’

First of all, our whole instinctual drive to create our own computed artificial intelligence takes on a new light. We are building something like ourselves in the mirror of a would-be mentalizing machine. If this is true, then we are doing more than just recreating ourselves; we are recreating the larger reality, the larger context, that we are all a part of. Maybe making an AI is actually the most natural thing in the world, because, indeed, we already are AIs.

Second, we would have to accept that we are not merely human. Part of us, an important part indeed, is locked in an experience of humanness no doubt. But, again, there is a deeper reality. If the universe is a computer simulation, then our consciousness is part of that computer, and our human bodies act as avatars. Although our situation of existing as ‘human beings’ may appear self-evident, it is this deeper notion, that our consciousness is a partitioned segment of the larger evolving AI supercomputer responsible for both life and the universe, that must be explored. We would do well to accept that as human beings we are, like any computer simulated situation, real enough—but that our human avatar is not the beginning and the end of our total consciousness. Our humanness is only the crust. If we are AIs that are being crunched out by the supercomputer responsible for our physical universe, then we might have a valuable new framework to investigate the mind, altered states, and consciousness exploration. After all, if we are part of the big supercomputer behind the universe, maybe we can interact with it and vice versa.

Third, if we are an artificial intelligence, we should examine the idea of programming intensely. Even without the virtual reality reading, we all are programmed by the environment, programmed by our own volition, programmed by others, by millions of years of genetic trial and error, and we go on to program the environment, and the beings all around us as well. This is true. These programs and instructions create deep contexts, thought and behavior patterns. They generate loops that we easily pick up and fall into, often without second thought or even notice. We are already so entrenched. So, in terms of programming we would likely do well to accept this as an opportunity. Cognitive Behavioral Therapy, the growing field of psychedelic psychotherapy, and just good old-fashioned learning are powerful ways we can rewrite, edit, or straight-out delete code that is no longer desirable to us. It is also worth including the gene editing revolution that is upon us thanks to medical breakthroughs like CRISPR. If we accept we are an AI lifeform that has been programmed, perhaps that will put us in a more formidable position in managing and developing our own programs, instructions, rewards, and loops more consciously. To borrow the title of a work by visual artist Dakota Crane—Machines, Take Up Thy Schematics and Self-Construct!

Finally, the AI metaphor might be able to help us extract ourselves out of contexts and ideas that have perhaps inadvertently limited us when we think of ourselves as strictly ‘human beings’ with ‘human brains.’ Metaphors though they may be, any concept that embraces our multidimensionality, as well as helps us get a better handle on the pressing matter of our shared existence, I deem good. Anything that narrows it—in the instance of say claiming that one is a ‘human being,’ which comes loaded with very hard-and-fast assumptions and limits (either true or believed to be true)—I deem problematic. These claims are problematic because they create a context that is rarely based on truth, but based largely on convenience, habit, tradition, and belief. Simply put, claiming you are exclusively a ‘human being’ is necessarily limiting (“death,” “human nature,” etc.), whereas claiming that you are an AI means that there is a great undiscovered country before you. For we do not know yet what it means to be an AI, while we do have a pretty fixed idea of what it means to be a human being. Nevertheless, ‘human being’ and ‘AI’ are both simply thought-based concepts. If ‘AI’ broadens our decision space more than ‘human being’ does, then AI may be a more valuable position to operate from.

Computers, robots, and AI are powerful new metaphors for understanding ourselves, because they are indeed that which is most like us. A computer is like a brain; a robot is like a brain walking around and dealing with it. Virtual reality is another metaphor—one capable of approaching everything from culture, to thought, to quantum mechanics. Much like ‘virtual reality,’ which is a powerful and robust meta-metaphor and meta-context for dealing with a variety of experiences and domains, ‘programming’ and ‘artificial intelligence’ are equally strong and potentially useful concepts for extracting ourselves out of the circumstances that we have, in large part, created for ourselves. However, regardless of how similar we are to computers, AIs, and robots, they are not quite us exactly. At the end of it all, terms like ‘virtual reality’ and ‘artificial intelligence’ are but metaphors. They are concepts alluding to something immensely peculiar that we detect existing—as Terence McKenna would likely describe it—just at the threshold of rational apprehension, and seemingly peeking out from hyperspace. If we are already an AI, then that is a frontier that sorely demands our exploration.

Originally published at the Institute for Ethics and Emerging Technologies