(This essay has been published by the Innovation Journalism Blog, the Deutsche Welle Global Media Forum, and the EJC Magazine of the European Journalism Centre.)

Thousands of lives were consumed by the November terror attacks in Mumbai.

“Wait a second”, you might be thinking. “The attacks were truly horrific, but all news reports say around two hundred people were killed by the terrorists, so thousands of lives were definitely not consumed.”

You are right. And you are wrong.

Indeed, around 200 people were murdered by the terrorists in an act of chilling exhibitionism. And still, thousands of lives were consumed. Imagine that a billion people devoted, on average, one hour of their attention to the Mumbai tragedy: following the news, thinking about it, discussing it with other people. The number is a wild guess, but the guess is far from a wild number. There are over a billion people in India alone. Many there spent whole days following the drama. One billion people times one hour is one billion hours, which is more than 100,000 years. Global average life expectancy today is about 66 years. So nearly two thousand lifetimes were consumed by news consumption. That is far more than the number of people murdered, by any standard.
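
For readers who want to check the arithmetic, here is a minimal sketch of the calculation above. The audience size and the one-hour average are the essay's own guesses, not measurements, and the function name `lifetimes_consumed` is invented for this illustration.

```python
HOURS_PER_YEAR = 24 * 365.25  # 8,766 hours in an average calendar year


def lifetimes_consumed(viewers, hours_each, life_expectancy_years):
    """Convert total hours of attention into equivalent human lifetimes."""
    total_hours = viewers * hours_each
    total_years = total_hours / HOURS_PER_YEAR
    return total_years / life_expectancy_years


# Assumptions taken from the essay: one billion people, one hour each,
# and a global average life expectancy of 66 years.
attention_years = 1_000_000_000 * 1 / HOURS_PER_YEAR
lives = lifetimes_consumed(1_000_000_000, 1, 66)

print(f"{attention_years:,.0f} years of attention")
print(f"{lives:,.0f} lifetimes")
```

Under these assumptions the result is roughly 114,000 years of attention, or about 1,700 lifetimes. Doubling the guessed average to two hours doubles the result, so the conclusion is only as solid as the guess behind it.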

In a sense, the newscasters became unwilling bedfellows of the terrorists. One terrorist survived the attacks, confessing to the police that the original plan had been to top off the massacre by taking hostages and outlining demands in a series of dramatic calls to the media. The terrorists wanted attention. They wanted the newsgatherers to give it to them, and they got it. Their goal was not to kill a few hundred people. It was to scare billions, forcing people to change their reasoning and behavior. The terrorists pitched their story by being extra brutal, providing news value. Their targets, among them luxury hotels frequented by the international business community, provided a set of target audiences for the message of their sick reality show. Several people in my professional surroundings canceled business trips to Mumbai after watching the news. The terrorists succeeded. We must expect more terror attacks on luxury hotels in the future.

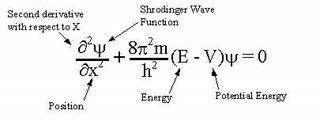

Can the journalists and news organizations who were in Mumbai be blamed for serving the interests of the terrorists? I think not. They were doing their jobs, reporting on the big scary event. The audience flocked to their stories. Their business model, generating and brokering attention, was exploited by the terrorists. The journalists were working on behalf of the audience, not on behalf of the terrorists. But that did not change the outcome. The victory of the terrorists grew with every eyeball that was attracted by the news. Without doubt, one of the victims was the role of journalism as a non-involved observer. It got zapped by a paradox. It’s not the first time. Journalism always follows “the Copenhagen interpretation” of quantum mechanics: You can’t measure a system without influencing it.

Self-reference is a classic dilemma for journalism. Journalism wants to observe, not be an actor. It wants to cover a story without becoming part of it. At the same time it aspires to empower the audience. But by empowering the audience, it becomes an actor in the story. Non-involvement won’t work; it is a self-referential paradox like the Epimenides paradox (the prophet from Crete who said “All Cretans are liars”). The basic self-referential paradox is the liar’s paradox (“This sentence is false”). This can be a very constructive paradox, if taken by the horns. It inspired Kurt Gödel to reinvent the foundation of mathematics, addressing self-reference. Perhaps the principles of journalism can be reinvented, too? Perhaps the paradox of non-involvement can be replaced by ethics of engagement as practiced by, for example, psychologists and lawyers?

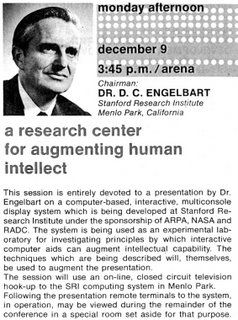

between people sitting behind computers linked by a network, “the mother of all demos”, when Doug Engelbart and his team at SRI demoed the first computer mouse, interactive text, video conferencing, teleconferencing, e-mail and hypertext.

Only 40 years after their first demo, and only 15 years after the Internet reached beyond the walls of university campuses, Doug’s tools are in almost every home and office. Soon they’ll be built into every cell phone. We are always online. For the first time in human history, the attention of the whole world can soon be summoned simultaneously. If we summon all the attention the human species can supply, we can focus two hundred human years of attention onto a single issue in a single second. This attention comes equipped with glowing computing power that can process information in a big way.
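
The "two hundred human years in a single second" figure can be checked with a rough sketch. The world population of 6.7 billion is an assumption of mine (an approximate figure for the time of writing), not a number stated in this essay, and the function name is invented for illustration.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60  # 31,557,600 seconds in an average year


def attention_years_per_second(population):
    """Years of attention supplied when everyone attends for one second."""
    return population / SECONDS_PER_YEAR


# Assumed world population (approximate, not taken from the essay).
ASSUMED_WORLD_POPULATION = 6_700_000_000

years_per_second = attention_years_per_second(ASSUMED_WORLD_POPULATION)
print(f"{years_per_second:.0f} human years of attention per second")
```

With that assumed population the answer comes out a little above two hundred years, which agrees with the round number used above.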

Every human on the Net is using a computer device able to do millions or billions of operations per second. And more is to come. New computers are always more powerful than their predecessors. Computing power has roughly doubled every two years for decades. This trend is popularly known as Moore’s Law.

If the trend continues for another 40 years, people will be using computers one million times more powerful than today. Try imagining what you can do with that in your phone or hand-held gaming device! Internet bandwidth is also booming. Everybody on Earth will have at least one gadget. We will all be well connected. We will all be able to focus our attention, our ideas and our computational powers on the same thing at the same time. That’s pretty powerful. This is actually what Doug was facilitating when he dreamed up the Demo. The mouse, which is what Doug is famous for today, is only a detail. Doug says we can only solve the complex problems of today by summoning collective intelligence. Nuclear war, pandemics, global warming. These are all problems requiring collective intelligence. The key to collective intelligence is collective attention. The flow of attention controls how much of our collective intelligence gets allocated to different things.
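
The "one million times" figure follows directly from doubling every two years. Here is a minimal sketch, assuming the doubling period stays constant for the whole 40 years, which is an extrapolation and not a guarantee. The function name `growth_factor` is made up for this example.

```python
def growth_factor(years, doubling_period_years=2):
    """Growth multiple after `years` if power doubles every `doubling_period_years`."""
    doublings = years / doubling_period_years
    return 2 ** doublings


# 40 years at one doubling every two years is 20 doublings.
factor = growth_factor(40)
print(f"{factor:,.0f} times more powerful")
```

Twenty doublings give 2 to the power of 20, a little over one million. If the doubling period slipped to three years, the same 40 years would yield a factor of only about ten thousand, so the estimate is quite sensitive to that one assumption.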

When Doug Engelbart keynoted the Fourth Conference on Innovation Journalism, he pointed out that journalism is the perception system of collective intelligence. He hit the nail on the head. When people share news, they have a story in common. This shapes a common picture of the world and a common set of narratives for discussing it. It is agenda setting (there is an established “agenda-setting theory” about this). Journalism is the leading mechanism for generating collective attention. Collective attention is needed for shaping a collective opinion. Collective intelligence might require a collective opinion in order to address collective issues.

There is an upside and a downside to everything. We can now summon collective attention to track the spread of diseases. But we are also more susceptible to fads, hypes and hysterias. Will our ability to focus collective attention improve our lives or will we become victims of collective neurosis?

We are moving into the attention economy. Information is no longer a scarce commodity. But attention is. Some business strategists think ‘attention transactions’ can replace financial transactions as the focus of our economy. In this sense, the effects of collective attention on society are the macroeconomics of the attention economy. Collective attention is key for exercising collective intelligence. Journalism, the professional generator and broker of collective attention, is a key factor.

This brings us back to Mumbai. How collectively intelligent was it to spend thousands of human lifetimes of attention following the slaughter of hundreds? The jury is out on that one — it depends on the outcome of our attention. Did the collective attention benefit the terrorists? Yes, at least in the short term. Perhaps even in the long term. Did it help solve the situation in Mumbai? Unclear. Could the collective attention have been aimed in other ways at the time of the attacks, which would have had a better outcome for people and society? Yes, probably.

The more wired the world gets, the more terrorism can thrive. When our collective attention grows, the risk of collective fear and obsession follows. It is a threat to our collective mental health, one that will only increase unless we introduce some smart self-regulating mechanisms. These could direct our collective attention to the places where it would benefit society instead of harming it.

The dynamic between terrorism and journalism is a market failure of the attention economy.

No, I am not supporting government control over the news. A planned economy has proven not to be a solution for market failures. The problem needs to be solved by a smart feedback system. Solutions may lie in new business models that give journalism incentives to generate constructive and proportional attention around issues, empowering people and bringing value to society. Just selling raw eyeballs or Internet traffic by the pound to advertisers is a recipe for market failure in the attention economy. So perhaps it is not all bad that the traditional raw eyeball business models are being re-examined. It is a good time for researchers to look at how different journalism business models generate different sorts of collective attention, and how that drives our collective intelligence. Really good business models for journalism bring prosperity to the journalism industry, its audience, and the society it works in.

For sound new business models to arise, journalism needs to come to grips with its inevitable role as an actor. Instead of discussing why journalists should not get involved with sources or become parts of the stories they tell, perhaps the solution is for journalists to discuss why they should get involved. Journalists must find a way to do so without losing the essence of journalism.

Ulrik Haagerup is the leader of the Danish National Public News Service, DR News. He is tired of seeing ‘bad news makes good news and good news makes bad news’. Haagerup is promoting the concept of “constructive journalism”, which focuses on enabling people to improve their lives and societies. Journalism can still be critical, independent and kick butt.

The key issue Haagerup pushes is that it is not enough to show the problem and the awfulness of horrible situations. That only feeds collective obsession, neurosis and, ultimately, depression. Journalism must cover problems from the perspective of how they can be solved. Then our collective attention can be very constructive. Constructive journalism will look for all kinds of possible solutions, comparing and scrutinizing them, finding relevant examples and involving the stakeholders in the process of finding solutions.

I will be working with Haagerup this summer. Together with Willi Rütten of the European Journalism Centre, we will be presenting a workshop on ‘constructive innovation journalism’ at the Deutsche Welle Global Media Forum, 3–5 June 2009.