Law enforcement needs to be innovative and act now in order to keep pace with near-future criminal threats, warns the ‘Do criminals dream of electric sheep’ paper.

Artificial Intelligence (AI) is an emerging field of computer programming that is already changing the way we interact online and in real life, but the term ‘intelligence’ has been poorly defined. Rather than focusing on smarts, researchers should be looking at the implications and viability of artificial consciousness as that’s the real driver behind intelligent decisions.

Consciousness rather than intelligence should be the true measure of AI. At the moment, despite all our efforts, there’s none.

Significant advances have been made in the field of AI over the past decade, in particular with machine learning, but artificial intelligence itself remains elusive. Instead, what we have is artificial serfs—computers with the ability to trawl through billions of interactions and arrive at conclusions, exposing trends and providing recommendations, but they’re blind to any real intelligence. What’s needed is artificial awareness.

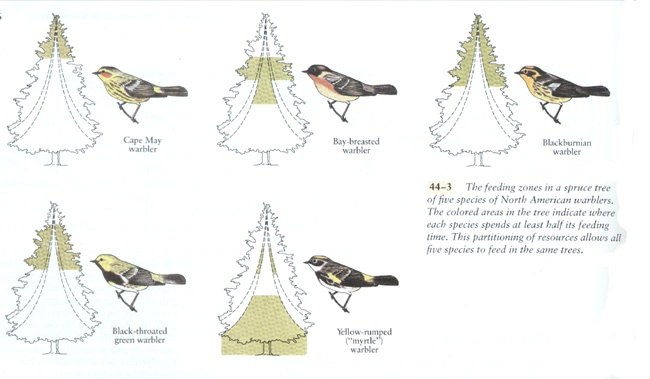

Elon Musk has called AI the “biggest existential threat” facing humanity and likened it to “summoning a demon,”[1] while Stephen Hawking thought it would be the “worst event” in the history of civilization and could “end with humans being replaced.”[2] Although this sounds alarmist, like something from a science fiction movie, both concerns are founded on a well-established scientific premise found in biology—the principle of competitive exclusion.[3]

Competitive exclusion describes a natural phenomenon first outlined by Charles Darwin in On the Origin of Species. In short, when two species compete for the same resources, one will invariably win over the other, driving it to extinction. Forget about meteorites killing the dinosaurs or supervolcanoes wiping out life: this principle describes how the vast majority of species have gone extinct over the past 3.8 billion years![4] Put simply, someone better came along, and that’s what Elon Musk and Stephen Hawking are concerned about.

When it comes to Artificial Intelligence, there’s no doubt computers have the potential to outpace humanity. Already, their ability to remember vast amounts of information with absolute fidelity eclipses our own. Computers regularly beat grandmasters at competitive strategy games such as chess, but can they really think? The answer is no, and this is a significant problem for AI researchers. The inability to think and reason properly leaves AI susceptible to manipulation. What we have today is dumb AI.

Rather than fearing some all-knowing malignant AI overlord, the threat we face comes from dumb AI as it’s already been used to manipulate elections, swaying public opinion by targeting individuals to distort their decisions. Instead of ‘the rise of the machines,’ we’re seeing the rise of artificial serfs willing to do their master’s bidding without question.

Russian President Vladimir Putin understands this better than most, and said, “Whoever becomes the leader in this sphere will become the ruler of the world,”[5] while Elon Musk commented that competition between nations to create artificial intelligence could lead to World War III.[6]

The problem is we’ve developed artificial stupidity. Our best AI lacks actual intelligence. The most complex machine learning algorithm we’ve developed has no conscious awareness of what it’s doing.

For all of the wonderful advances made by Tesla, its in-car autopilot drove into the back of a bright red fire truck because it wasn’t programmed to recognize that specific object, and this highlights the problem with AI and machine learning—there’s no actual awareness of what’s being done or why.[7] What we need is artificial consciousness, not intelligence. A computer CPU with 18 cores, capable of processing 36 independent threads, running at 4 gigahertz, handling hundreds of millions of commands per second, doesn’t need more speed, it needs to understand the ramifications of what it’s doing.[8]

In the US, courts regularly use COMPAS, a complex computer algorithm using artificial intelligence to determine sentencing guidelines. Although it’s designed to reduce the judicial workload, COMPAS has been shown to be ineffective, being no more accurate than random, untrained people at predicting the likelihood of someone reoffending.[9] At one point, its predictions of violent recidivism were only 20% accurate.[10] And this highlights a perception bias with AI—complex technology is inherently trusted, and yet in this circumstance, tossing a coin would have been an improvement!

Dumb AI is a serious problem with serious consequences for humanity.

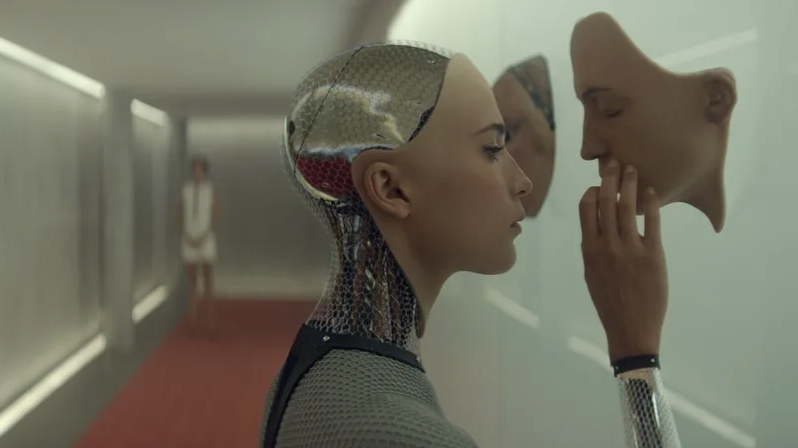

What’s the solution? Artificial consciousness.

It’s not enough for a computer system to be intelligent or even self-aware. Psychopaths are self-aware. Computers need to be aware of others; they need to understand cause and effect, as it relates not just to humanity but to life in general, if they are to make truly intelligent decisions.

All of human progress can be traced back to one simple trait: curiosity. The ability to ask, “Why?” This one simple concept has led us not only to an understanding of physics and chemistry, but to the development of ethics and morals. We’ve asked not only “Why is the sky blue?” but also “Why am I treated this way?” And the answers to those questions have shaped civilization.

COMPAS needs to ask why it arrives at a certain conclusion about an individual. Rather than simply crunching probabilities that may or may not be accurate, it needs to understand the implications of freeing an individual weighed against the adversity of incarceration. Spitting out a number is not good enough.

In the same way, Tesla’s autopilot needs to understand the implications of driving into a stationary fire truck at 65 mph: what it means for the occupants of the vehicle, the fire crew, and the emergency they’re attending. These are concepts we intuitively grasp as we encounter such a situation. Having a computer manage the physics of an equation is not enough without understanding the moral component as well.

The advent of true artificial intelligence, one that has artificial consciousness, need not be the end-game for humanity. Just as humanity developed civilization and enlightenment, so too will AI become our partner in life if it is built to be aware of morals and ethics.

Artificial intelligence needs culture as much as logic, ethics as much as equations, morals and not just machine learning. How ironic that the real danger of AI comes down to how much conscious awareness we’re prepared to give it. As long as AI remains our slave, we’re in danger.

tl;dr — Computers should value more than ones and zeroes.

About the author

Peter Cawdron is a senior web application developer for JDS Australia working with machine learning algorithms. He is the author of several science fiction novels, including RETROGRADE and REENTRY, which examine the emergence of artificial intelligence.

[1] Elon Musk at MIT Aeronautics and Astronautics department’s Centennial Symposium

[2] Stephen Hawking on Artificial Intelligence

[3] The principle of competitive exclusion is also called Gause’s Law, although it was first described by Charles Darwin.

[4] Peer-reviewed research paper on the natural causes of extinction

[5] Vladimir Putin in a televised address to the Russian people

[6] Elon Musk tweeting that competition to develop AI could lead to war

[7] Tesla car crashes into a stationary fire engine

[9] Recidivism predictions no better than random strangers

[10] Violent recidivism predictions only 20% accurate

Voters in Denver, a city at the forefront of the widening national debate over legalizing marijuana, have become the first in the nation to effectively decriminalize another recreational drug: hallucinogenic mushrooms.

The local ballot measure did not quite legalize the mushrooms that contain psilocybin, a naturally occurring psychedelic compound. State and federal regulations would have to change to accomplish that.

But the measure made the possession, use or cultivation of the mushrooms by people aged 21 or older the lowest-priority crime for law enforcement in the city of Denver and Denver County. Arrests and prosecutions, already fairly rare, would all but disappear.

Power suits, robotaxis, Leonardo da Vinci mania—just a few of the things to look out for in 2019. But what else will make our top ten stories for the year ahead?


What will be the biggest stories of the year ahead?

00:35 — 10 — Powered Clothing.

In 2019 power dressing will take on a whole new meaning when this strange-looking clothing hits the market. Not so much high fashion as high tech, it’s a suit with built-in power that will literally get people moving. Part of the wearable robotics revolution, the suit is made up of battery-powered muscle packs which contract just like a human muscle to boost the wearer’s strength. With the global population of over 60s expected to more than double by 2050, and retirement age increasing, there’s no shortage of potential markets. But don’t expect the suits to ease the burden on aching limbs and overstretched health services anytime soon — as these suits don’t come cheap. According to the manufacturer they’ll retail for around the cost of a bespoke tailored suit.

02:13 — 9 — The year of cheap flights.

2019 will be the year low-cost long-haul travel takes off. You’ll be able to buy a ten-thousand-mile flight from London to Sydney for around $350, and this is why. The world will boast two new state-of-the-art mega hub airports, and competition between them will drive down the cost of flying. Daxing Airport outside Beijing is due to open in 2019 and will feed growing demand for air travel in China. Beijing already has one of the world’s biggest airports, and for China this new mega hub will send an important message to the world. Rivaling Daxing as a national symbol of global prestige will be a new mega hub airport in Istanbul. Opened in 2018, it covers a staggering 26 square miles, an area larger than the island of Manhattan. And in 2019 consumers will again be the beneficiaries of a state-sponsored economic push. But the low fares offered by competition between these hubs could be short-lived.

04:06 — 8 — Stonewall riots at 50.

In 2019 LGBT communities will mark the anniversary of a seminal event — it will be 50 years since patrons at New York gay bar, The Stonewall Inn, resisted police attempts to arrest them. The resulting Stonewall riots kick-started the modern gay rights movement. In many countries the laws that continue to allow intolerance and inequality have their roots in religion. But one former British colony has given hope to the global movement for change. In 2018 India decriminalized homosexuality and gay rights campaigners hope 2019 will be the year other former British colonies follow suit. In February Kenya’s High Court will rule on whether to decriminalize same-sex intimacy which is currently punishable with up to 14 years in prison. Campaigners hope that decriminalization could start a domino effect across Africa.

Answer: Quite possibly because Facebook’s already forced you to log out and back into your account today.

The news: Facebook said hackers exploited a software flaw to access the records of almost 50 million customers. The firm said it had fixed the vulnerability and reported the breach to law enforcement.

The hack: The company said that the hackers had exploited a coding glitch that affected the service’s “View As” feature, which lets people see what their own profile looks like when someone else takes a look at it online. This allowed them to get hold of digital “tokens,” which are software keys that let people access their account without having to log back in every time.

While facial recognition systems perform well in controlled environments (like photos taken at borders), they struggle to identify faces in the wild. According to data released under the UK’s Freedom of Information laws, the Metropolitan Police’s AFR system has a 98 percent false positive rate, meaning that 98 percent of the “matches” it makes are of innocent people.
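To make the arithmetic behind that figure concrete, here is a minimal sketch of how such a percentage could be computed. The function name and the counts (100 alerts, 2 correct) are hypothetical, chosen only to reproduce the 98 percent figure; they are not the Metropolitan Police's actual numbers.

```python
def false_match_share(total_alerts: int, correct_alerts: int) -> float:
    """Return the fraction of alerts that flagged the wrong person."""
    if total_alerts <= 0:
        raise ValueError("total_alerts must be positive")
    if not 0 <= correct_alerts <= total_alerts:
        raise ValueError("correct_alerts must be between 0 and total_alerts")
    return (total_alerts - correct_alerts) / total_alerts


# Hypothetical counts, for illustration only.
share = false_match_share(total_alerts=100, correct_alerts=2)
print(f"{share:.0%} of alerts were false matches")
```

Note that this measures the share of alerts that were wrong, not the share of all scanned faces that were wrongly flagged, which is a different and usually much smaller number.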

The head of London’s Metropolitan Police force has defended the organization’s ongoing trials of automated facial recognition systems, despite legal challenges and criticisms that the technology is “almost entirely inaccurate.”

According to a report from The Register, UK Metropolitan Police commissioner Cressida Dick said on Wednesday that she did not expect the technology to lead to “lots of arrests,” but argued that the public “expect[s]” law enforcement to test such cutting-edge systems.