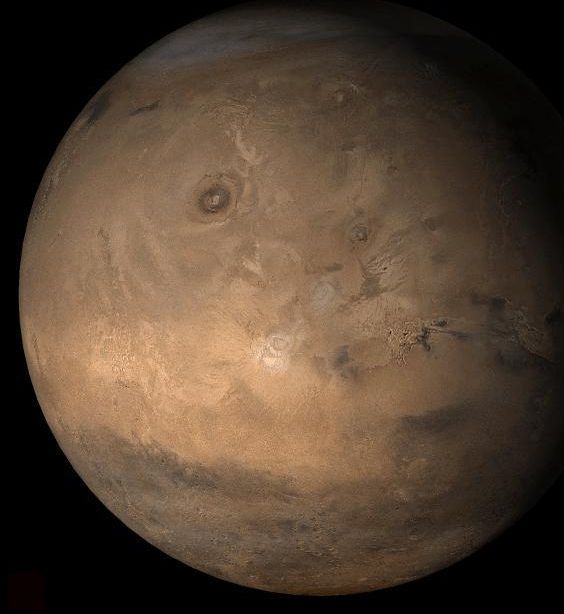

Mars has to have its own internet because no one’s going to wait 20 minutes for a download.

Elon Musk’s multibillion-dollar Mars rocket explained.

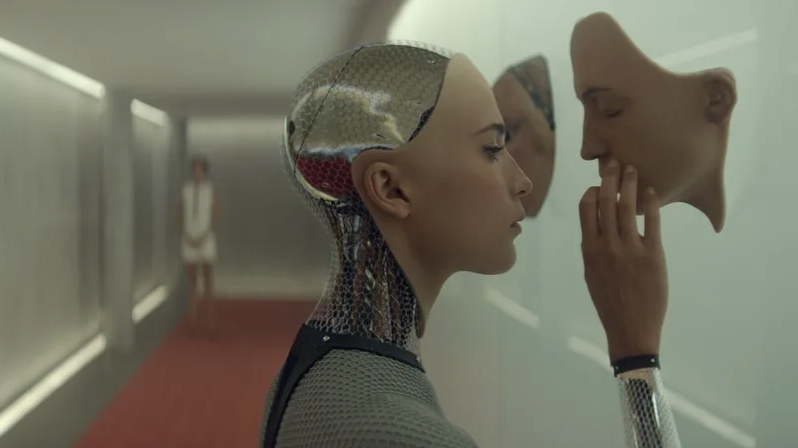

Artificial Intelligence (AI) is an emerging field of computer programming that is already changing the way we interact online and in real life, but the term ‘intelligence’ has been poorly defined. Rather than focusing on smarts, researchers should be looking at the implications and viability of artificial consciousness as that’s the real driver behind intelligent decisions.

Consciousness rather than intelligence should be the true measure of AI. At the moment, despite all our efforts, there’s none.

Significant advances have been made in the field of AI over the past decade, in particular with machine learning, but artificial intelligence itself remains elusive. Instead, what we have is artificial serfs—computers with the ability to trawl through billions of interactions and arrive at conclusions, exposing trends and providing recommendations, but they’re blind to any real intelligence. What’s needed is artificial awareness.

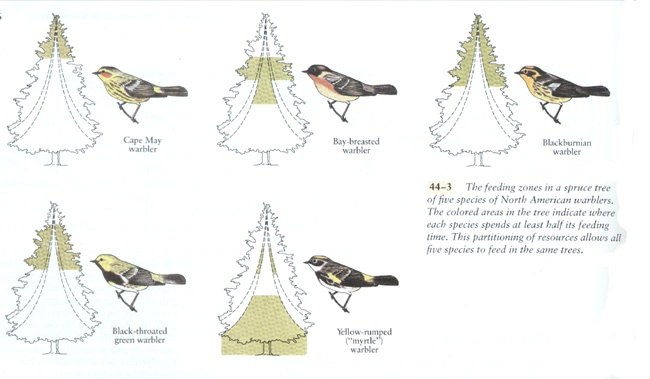

Elon Musk has called AI the “biggest existential threat” facing humanity and likened it to “summoning a demon,”[1] while Stephen Hawking thought it would be the “worst event” in the history of civilization and could “end with humans being replaced.”[2] Although this sounds alarmist, like something from a science fiction movie, both concerns are founded on a well-established scientific premise found in biology—the principle of competitive exclusion.[3]

Competitive exclusion describes a natural phenomenon first outlined by Charles Darwin in On the Origin of Species. In short, when two species compete for the same resources, one will invariably win over the other, driving it to extinction. Forget about meteorites killing the dinosaurs or supervolcanoes wiping out life; this principle describes how the vast majority of species have gone extinct over the past 3.8 billion years![4] Put simply, someone better came along, and that’s what Elon Musk and Stephen Hawking are concerned about.

When it comes to Artificial Intelligence, there’s no doubt computers have the potential to outpace humanity. Already, their ability to remember vast amounts of information with absolute fidelity eclipses our own. Computers regularly beat grandmasters at competitive strategy games such as chess, but can they really think? The answer is no, and this is a significant problem for AI researchers. The inability to think and reason properly leaves AI susceptible to manipulation. What we have today is dumb AI.

Rather than fearing some all-knowing malignant AI overlord, the threat we face comes from dumb AI as it’s already been used to manipulate elections, swaying public opinion by targeting individuals to distort their decisions. Instead of ‘the rise of the machines,’ we’re seeing the rise of artificial serfs willing to do their master’s bidding without question.

Russian President Vladimir Putin understands this better than most, and said, “Whoever becomes the leader in this sphere will become the ruler of the world,”[5] while Elon Musk commented that competition between nations to create artificial intelligence could lead to World War III.[6]

The problem is we’ve developed artificial stupidity. Our best AI lacks actual intelligence. The most complex machine learning algorithm we’ve developed has no conscious awareness of what it’s doing.

For all of the wonderful advances made by Tesla, its in-car autopilot drove into the back of a bright red fire truck because it wasn’t programmed to recognize that specific object, and this highlights the problem with AI and machine learning: there’s no actual awareness of what’s being done or why.[7] What we need is artificial consciousness, not intelligence. A computer CPU with 18 cores, capable of processing 36 independent threads, running at 4 gigahertz, handling hundreds of millions of commands per second, doesn’t need more speed; it needs to understand the ramifications of what it’s doing.[8]

In the US, courts regularly use COMPAS, a complex computer algorithm using artificial intelligence to determine sentencing guidelines. Although it’s designed to reduce the judicial workload, COMPAS has been shown to be ineffective, being no more accurate than random, untrained people at predicting the likelihood of someone reoffending.[9] At one point, its predictions of violent recidivism were only 20% accurate.[10] And this highlights a perception bias with AI—complex technology is inherently trusted, and yet in this circumstance, tossing a coin would have been an improvement!

Dumb AI is a serious problem with serious consequences for humanity.

What’s the solution? Artificial consciousness.

It’s not enough for a computer system to be intelligent or even self-aware. Psychopaths are self-aware. Computers need to be aware of others; they need to understand cause and effect as it relates not just to humanity but to life in general if they are to make truly intelligent decisions.

All of human progress can be traced back to one simple trait: curiosity. The ability to ask, “Why?” This one simple concept has led us not only to an understanding of physics and chemistry, but to the development of ethics and morals. We’ve asked not only, “Why is the sky blue?” but also, “Why am I treated this way?” And the answers to those questions have shaped civilization.

COMPAS needs to ask why it arrives at a certain conclusion about an individual. Rather than simply crunching probabilities that may or may not be accurate, it needs to understand the implications of freeing an individual weighed against the adversity of incarceration. Spitting out a number is not good enough.

In the same way, Tesla’s autopilot needs to understand the implications of driving into a stationary fire truck at 65 mph for the occupants of the vehicle, the fire crew, and the emergency they’re attending. These are concepts we intuitively grasp as we encounter such a situation. Having a computer manage the physics of an equation is not enough without understanding the moral component as well.

The advent of true artificial intelligence, one that has artificial consciousness, need not be the end-game for humanity. Just as humanity developed civilization and enlightenment, so too AI will become our partner in life if it is built to be aware of morals and ethics.

Artificial intelligence needs culture as much as logic, ethics as much as equations, morals and not just machine learning. How ironic that the real danger of AI comes down to how much conscious awareness we’re prepared to give it. As long as AI remains our slave, we’re in danger.

tl;dr — Computers should value more than ones and zeroes.

About the author

Peter Cawdron is a senior web application developer for JDS Australia working with machine learning algorithms. He is the author of several science fiction novels, including RETROGRADE and REENTRY, which examine the emergence of artificial intelligence.

[1] Elon Musk at MIT Aeronautics and Astronautics department’s Centennial Symposium

[2] Stephen Hawking on Artificial Intelligence

[3] The principle of competitive exclusion is also called Gause’s Law, although it was first described by Charles Darwin.

[4] Peer-reviewed research paper on the natural causes of extinction

[5] Vladimir Putin in a televised address to the Russian people

[6] Elon Musk tweeting that competition to develop AI could lead to war

[7] Tesla car crashes into a stationary fire engine

[9] Recidivism predictions no better than random strangers

[10] Violent recidivism predictions only 20% accurate

When Elon Musk and DARPA both hop aboard the cyborg hypetrain, you know brain-machine interfaces (BMIs) are about to achieve the impossible.

BMIs, already the stuff of science fiction, facilitate crosstalk between biological wetware and external computers, turning human users into literal cyborgs. Yet mind-controlled robotic arms, microelectrode “nerve patches”, and “memory Band-Aids” are still purely experimental medical treatments for those with nervous system impairments.

With the Next-Generation Nonsurgical Neurotechnology (N3) program, DARPA is looking to expand BMIs to the military. This month, the project tapped six academic teams to engineer radically different BMIs to hook up machines to the brains of able-bodied soldiers. The goal is to ditch surgery altogether—while minimizing any biological interventions—to link up brain and machine.

Elon Musk’s appearance on Tesla owner-enthusiast Ryan McCaffrey’s Ride the Lightning podcast revealed a number of new details about the electric car maker’s upcoming halo vehicle, the next-generation Roadster. While addressing the all-electric supercar, Musk discussed the vehicle’s estimated yearly production numbers, its purpose, and some details about its “SpaceX package.”

Ever the candid interviewee, Musk admitted that the next-generation Roadster is really more like a dessert to the main course of the Model S, 3, X, and Y, in that its existence will probably not have much impact on Tesla’s overall mission of accelerating the world’s transition to sustainable energy. Nevertheless, the Roadster still has a lot of merit, in that it could establish the superiority of pure electric propulsion over the internal combustion engine, bar none.

Musk noted that the Roadster is intended to outperform the best “Ferraris, Lamborghinis, and McLarens” on every dimension, on every level, including the track, thereby erasing the halo effect of gas cars. “We’re going to do things with the new Roadster that are kind of unfair to other cars. (It’s) crushingly good relative to the next best gasoline sports car,” Musk said.