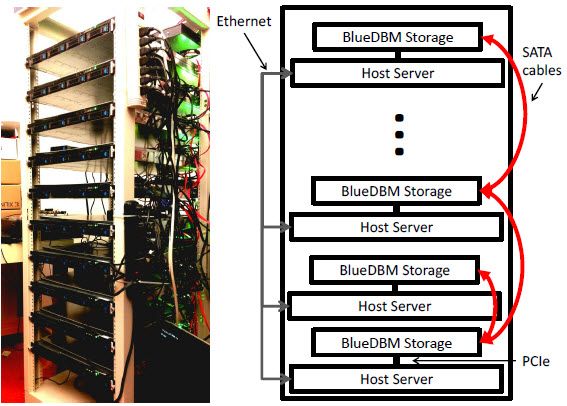

A 20-node BlueDBM Cluster (credit: Sang-Woo Jun et al./ISCA 2015)

There’s a big problem with big data: the huge amount of RAM it requires. Now MIT researchers have developed a new system called “BlueDBM” that should make servers using flash memory as efficient as those using conventional RAM for several common big-data applications, while preserving flash’s power and cost savings.

Here’s the context: Data sets in areas such as genomics, geological data, and daily Twitter feeds can be as large as 5 TB to 20 TB. Complex queries on such data sets require high-speed random-access memory (RAM). But holding that much data in RAM would require a huge cluster of up to 100 servers, each with 128 GB to 256 GB of DRAM (dynamic random-access memory).

Flash memory (used in smartphones and other portable devices) could provide an alternative to conventional RAM for such applications. It’s about a tenth as expensive, and it consumes about a tenth as much power. The problem: it’s also only about a tenth as fast.